Detecting Users' Emotional States during Passive Social Media Use

ACM IMWUT 2024

Abstract

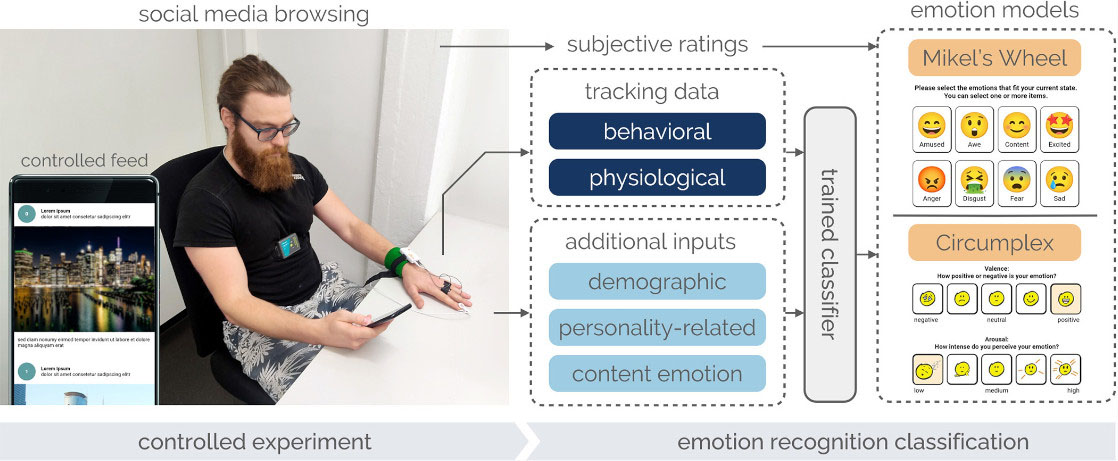

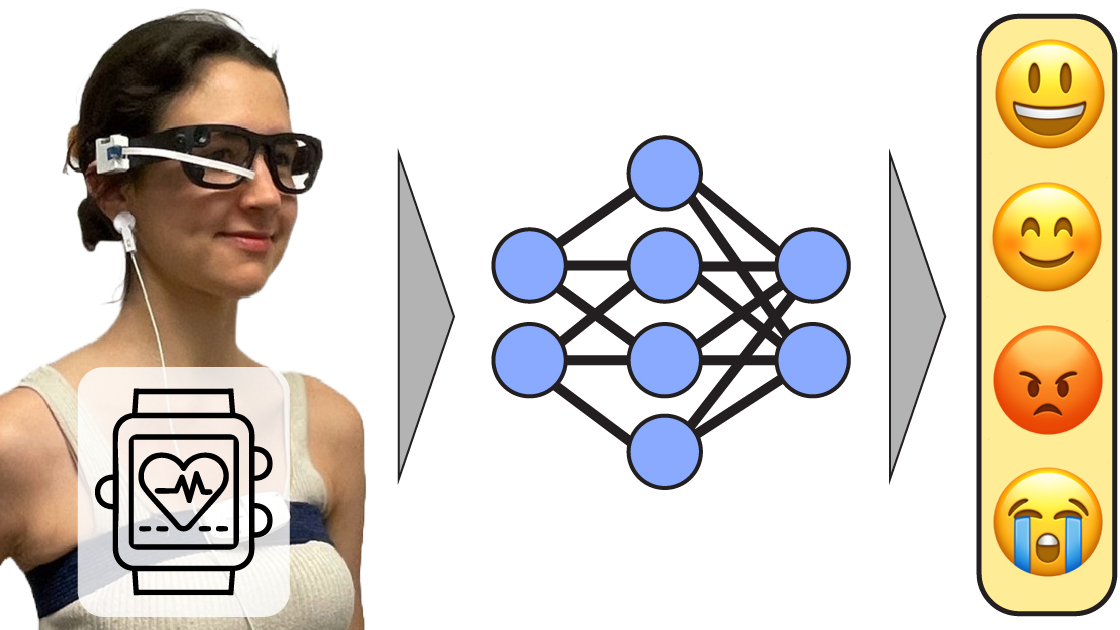

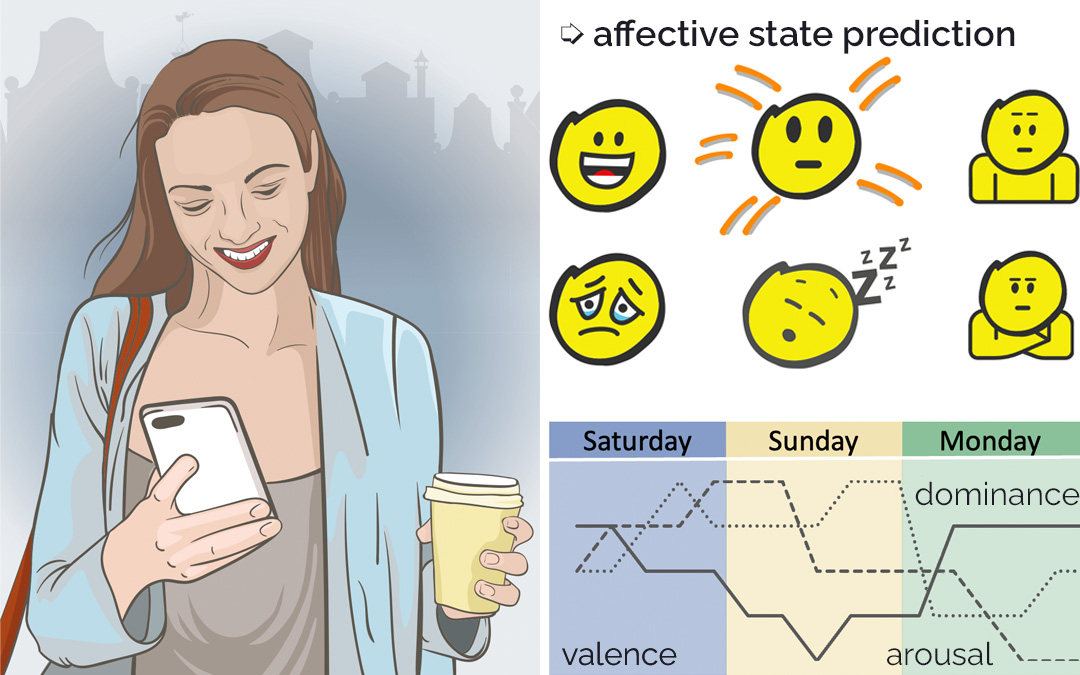

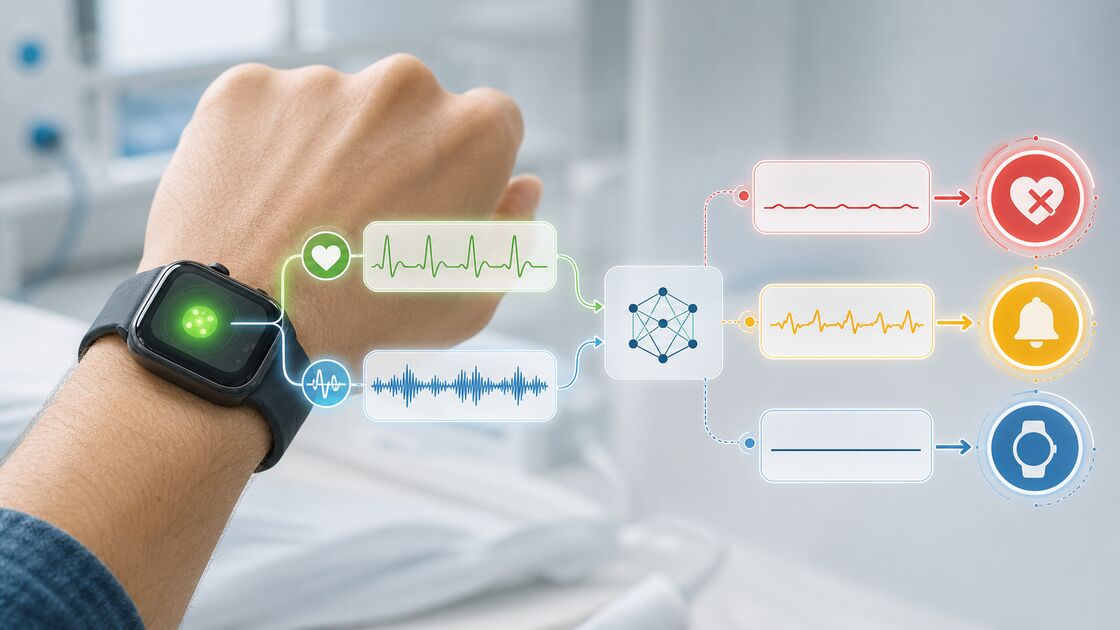

The widespread use of social media significantly impacts users’ emotions. Negative emotions in particular arise frequently and can drastically affect mental health. Recognizing these emotional states is essential for implementing effective warning systems in social networks. However, detecting emotions during passive social media use, the predominant mode of engagement, is challenging. We introduce the first predictive model that estimates users’ emotions during purely passive social media consumption. We conducted a study with 29 participants who interacted with a controlled social media feed. Our apparatus captured participants’ behavior and physiological signals while they browsed the feed and completed self-reports based on two validated emotion models. Using this data for supervised training, our emotion classifier robustly detected up to 8 emotional states and achieved a peak accuracy of 83% in classifying affect. Our analysis shows that behavioral features were sufficient to robustly recognize participants’ emotions. It further shows that, within 8 seconds of a change in media content, objective features reveal a participant’s new emotional state. We show that grounding labels in a componential emotion model outperforms grounding them in dimensional models for higher-resolution state detection. Our findings also demonstrate that incorporating the emotional properties of images, as predicted by a deep learning model, further improves emotion recognition.


Reference

Christoph Gebhardt, Andreas Brombach, Tiffany Luong, Otmar Hilliges, and Christian Holz. Detecting Users' Emotional States during Passive Social Media Use. In Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (ACM IMWUT), 2024.

Acknowledgments

We are grateful for the valuable feedback of Max Moebus and Shkurta Gashi on analysis design and paper drafts.