SIPLAB: Sensing, Interaction & Perception Lab

The SIPLAB is a research lab at ETH Zürich working on egocentric perception, computational interaction, and behavioral AI. We advance mixed reality, robotics, and human-computer interaction toward adaptive intelligence and human-aware systems. We are part of the Department of Computer Science and the AI Center.

egocentric perception

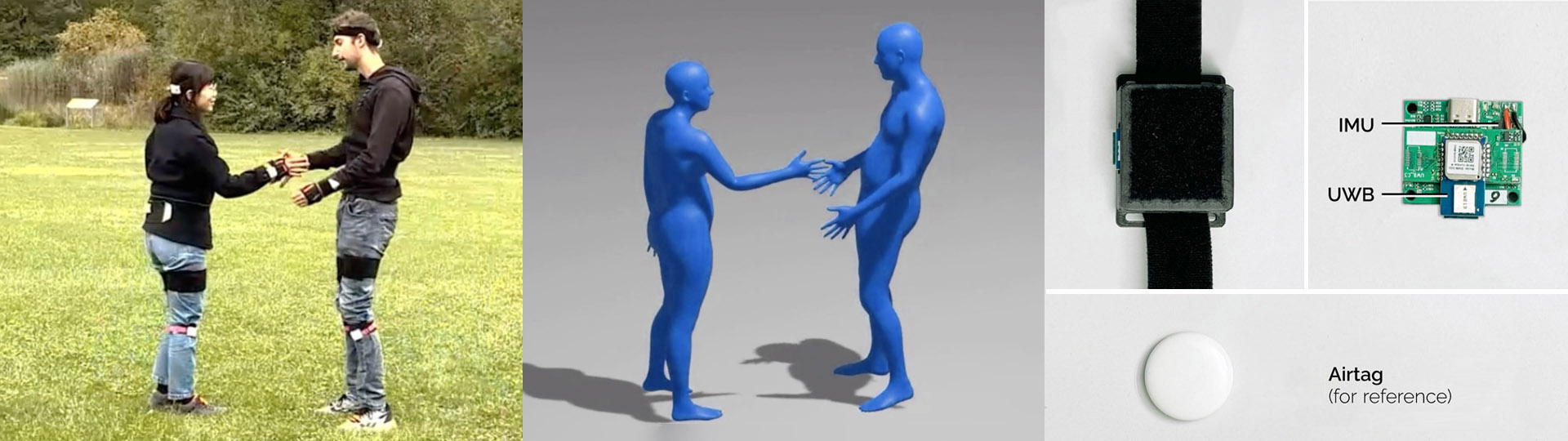

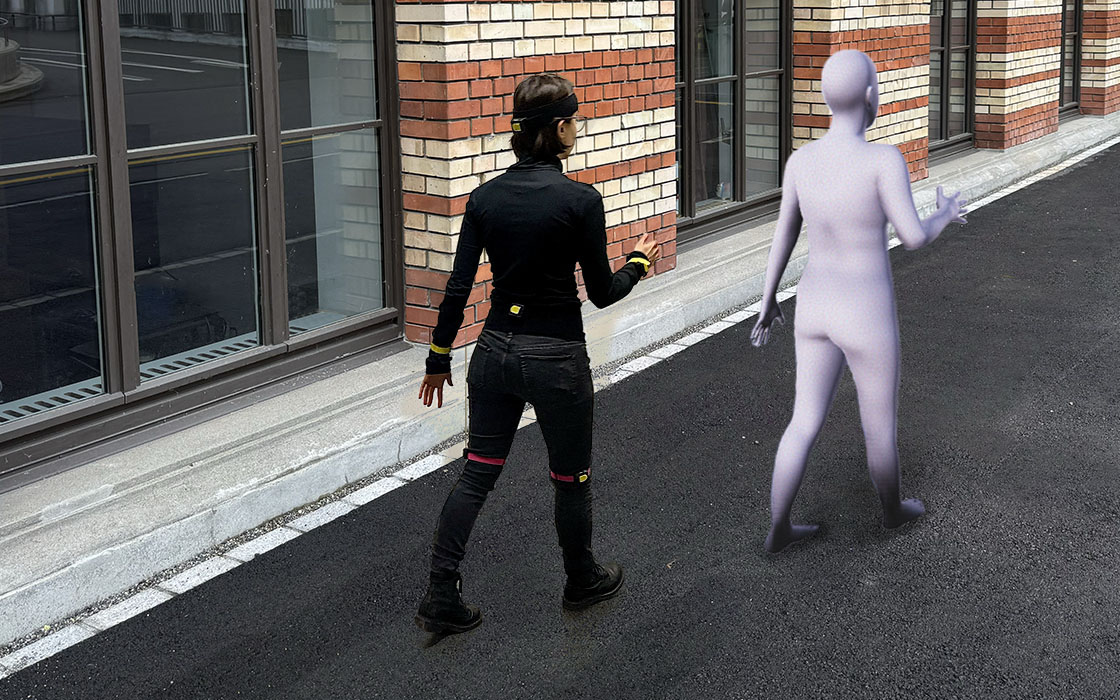

motion tracking, action understanding, and planning

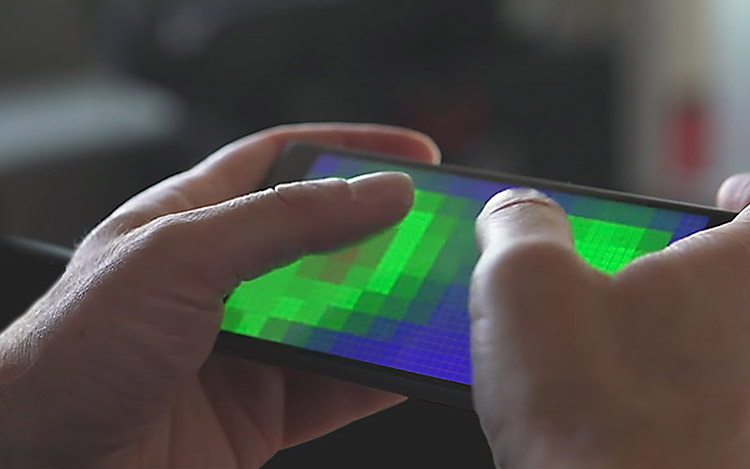

input decoding for adaptive systems

user modeling for computational interaction

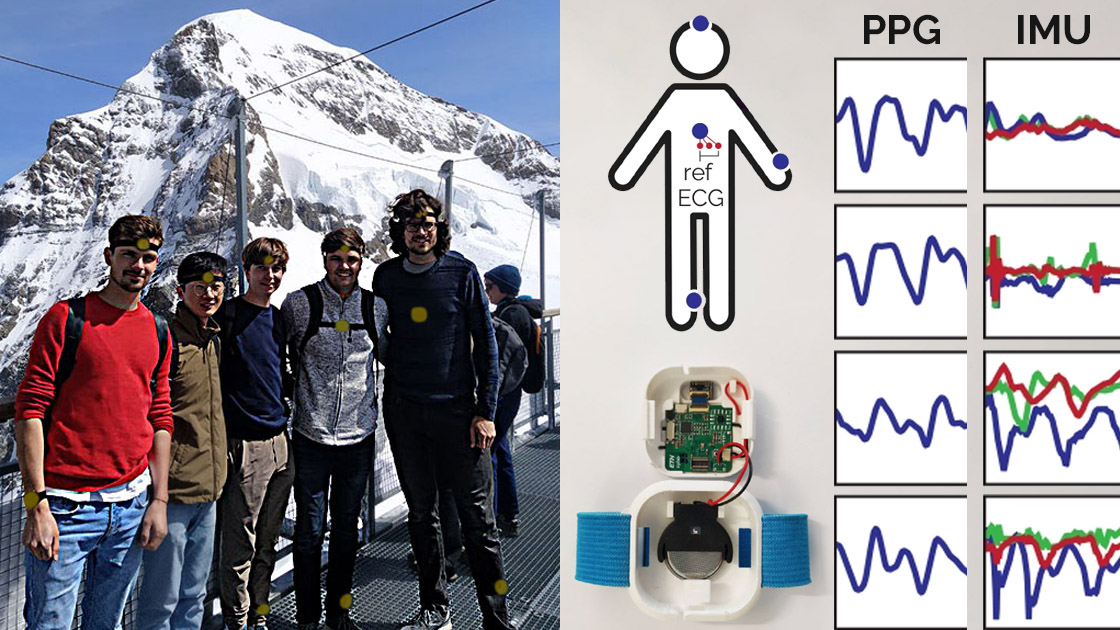

behavioral AI

human affect, emotions, and traits via digital biomarkers

Forthcoming and recent research projects

- Capacitive Sensing on Existing 3D Objects: Retrofitting Curved Surfaces with Multi-Touch Sensing

- Ultra Diffusion Poser: Diffusion Motion Tracking from Sparse Inertial Sensors

- Frequency-Weighted Neural Kalman Filters

Affiliations and collaborations

- ETH AI Center

- ELLIS Unit Zürich

- Max Planck ETH Center for Learning Systems (CLS)

- Department of Computer Science (D-INF)

- Department of Information Technology & Electrical Engineering (D-ITET)

- Robotics, Systems and Control Program at ETH Zürich

- ETH Augmented Reality Research Hub (ETHAR)

- Human-Computer Interaction @ ETH Zürich (HCI@ETH)

- Competence Centre for Rehabilitation Engineering and Science (ETH RESC)

- Zurich Information Security & Privacy Center (ZISC)