Research areas

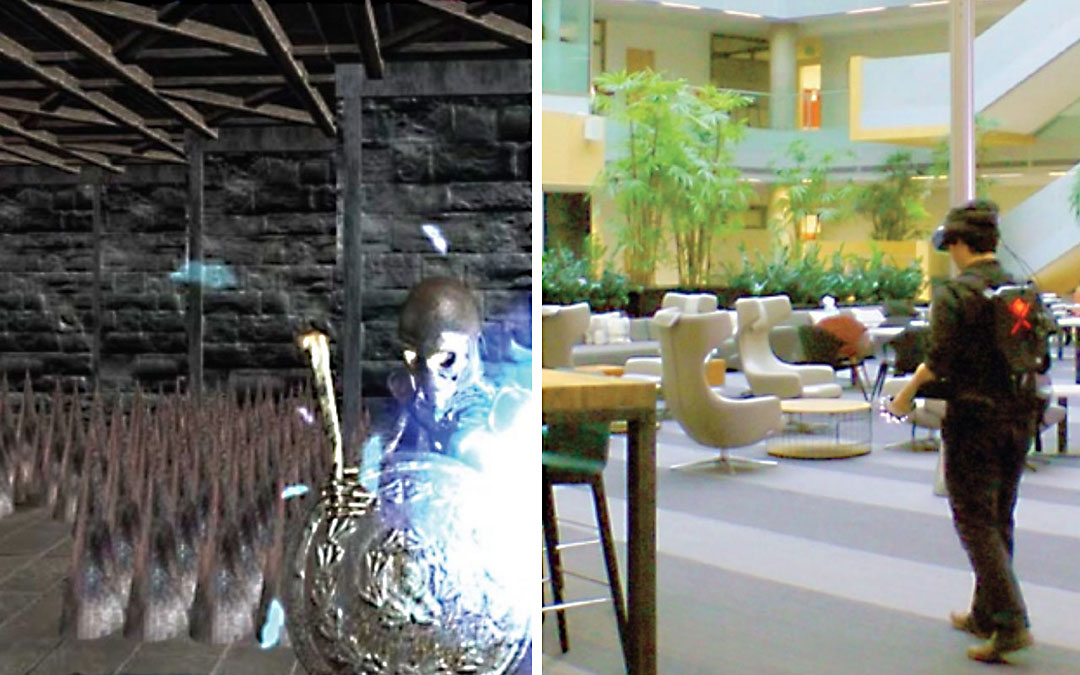

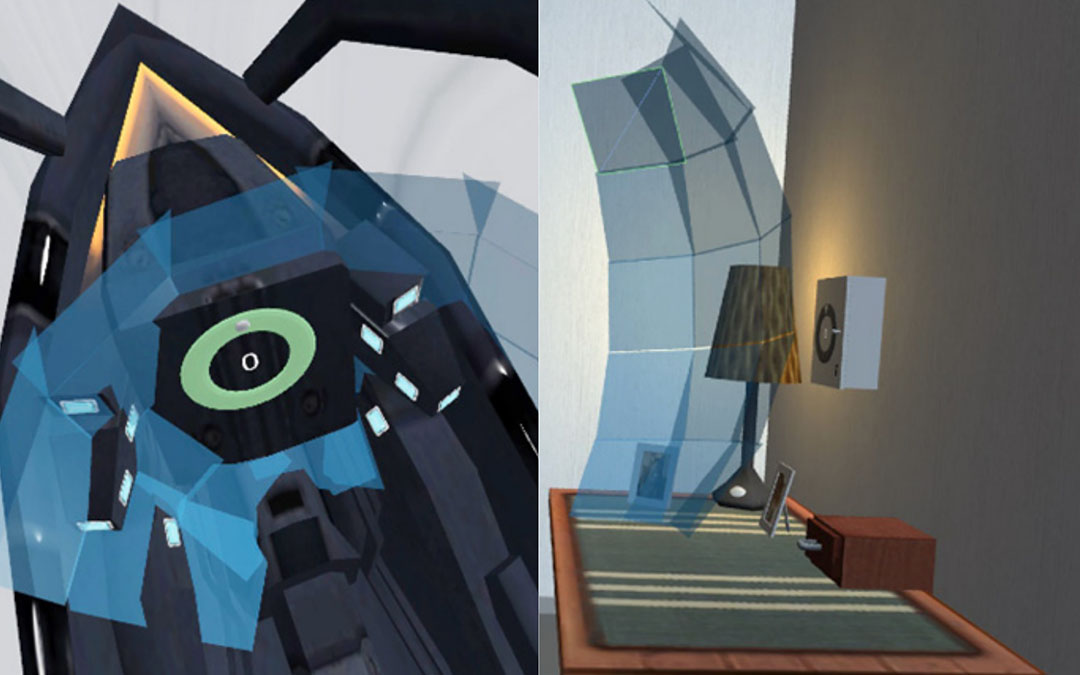

Adaptive XR

Context-aware mixed reality systems that adapt interfaces, environments, and behavior to users and situations.

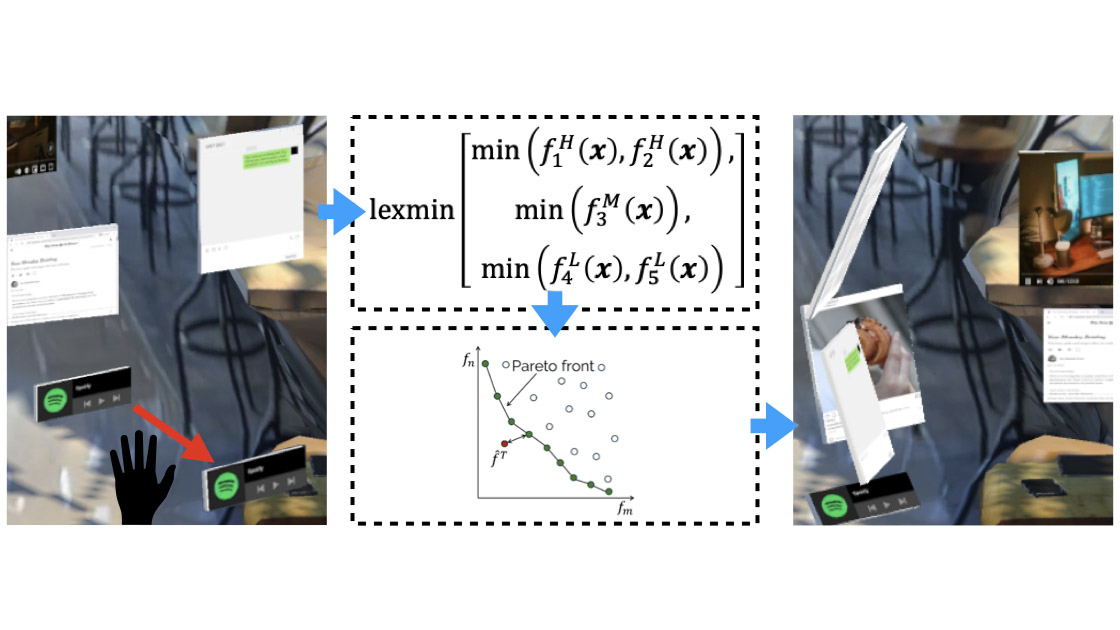

Preference-guided UI Adaptation

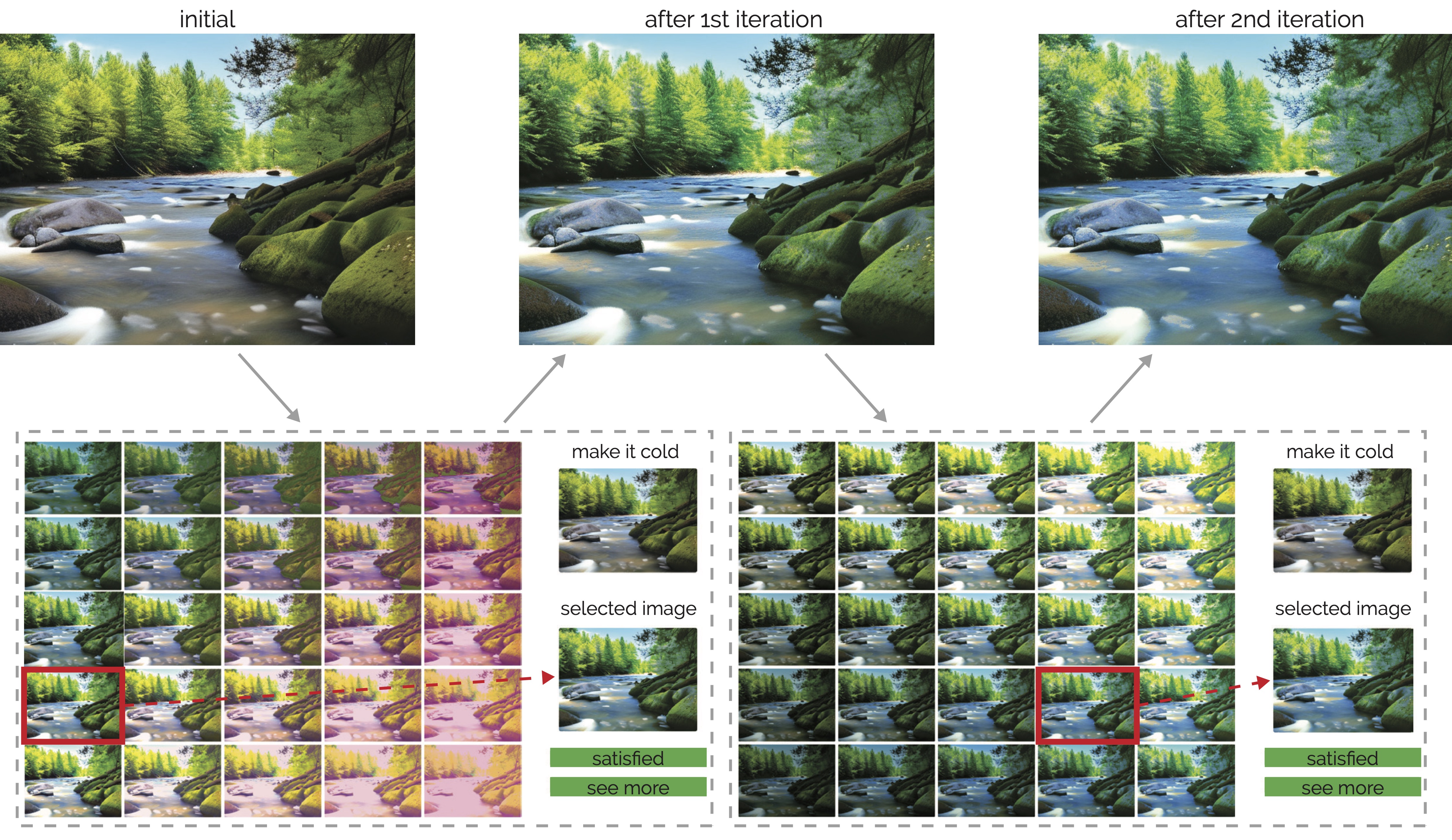

Efficient Visual Appearance Optimization

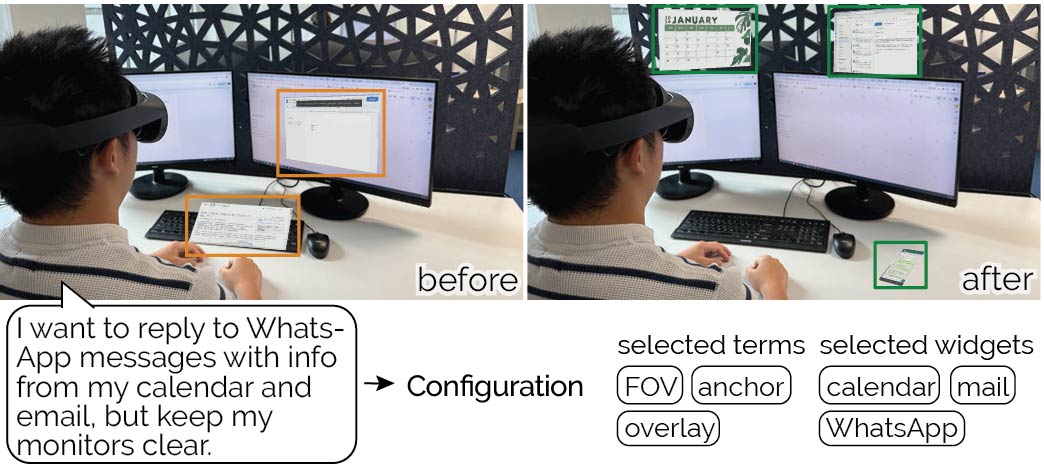

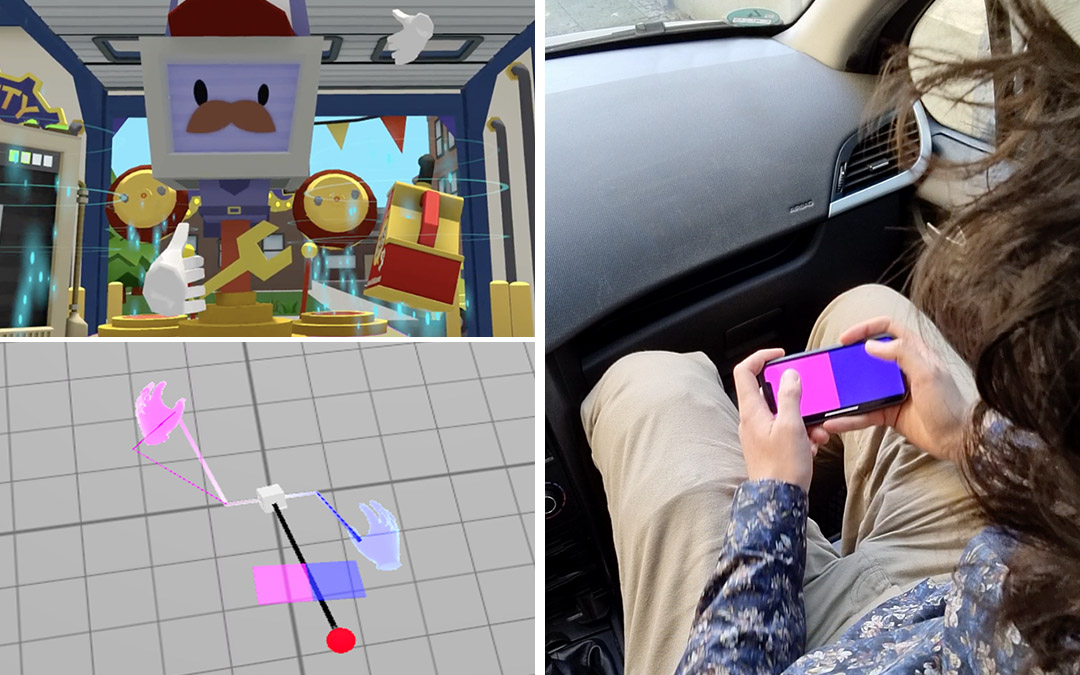

SituationAdapt

InteractionAdapt

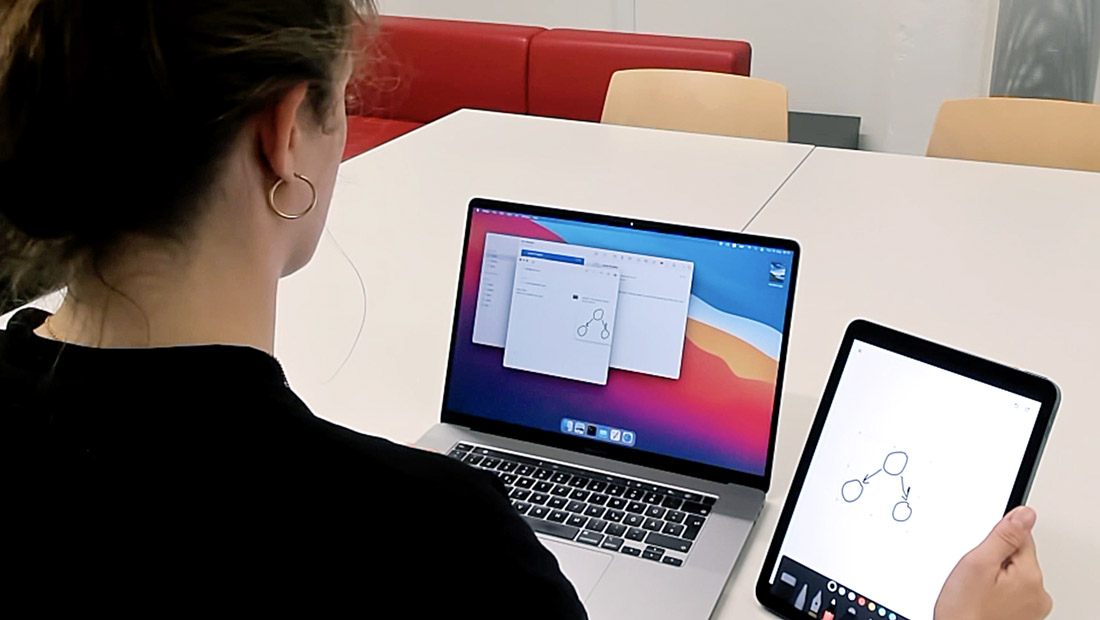

Cross-Device Shortcuts

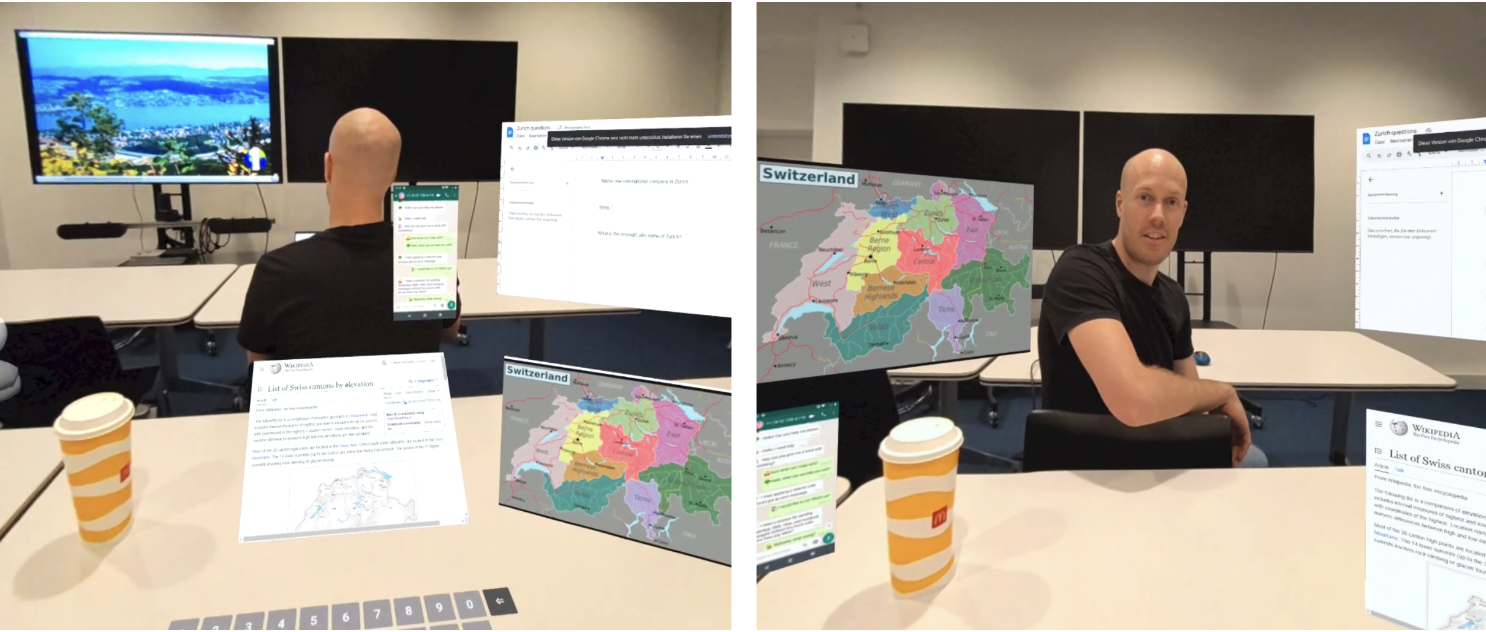

ViGather

Reality Rifts

InfinitePaint

Asynchronous Reality

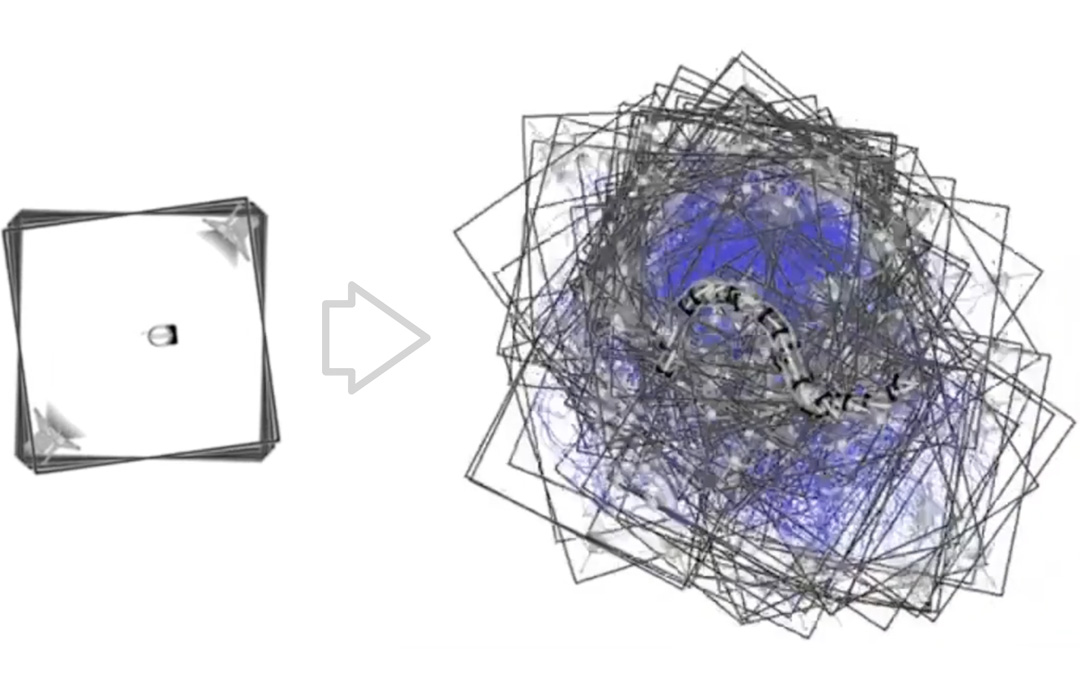

The Chaotic Behavior of Redirection

SoundsRide

AirConstellations

TransforMR

SurfaceFleet

DreamWalker

Mise-Unseen

RealityCheck

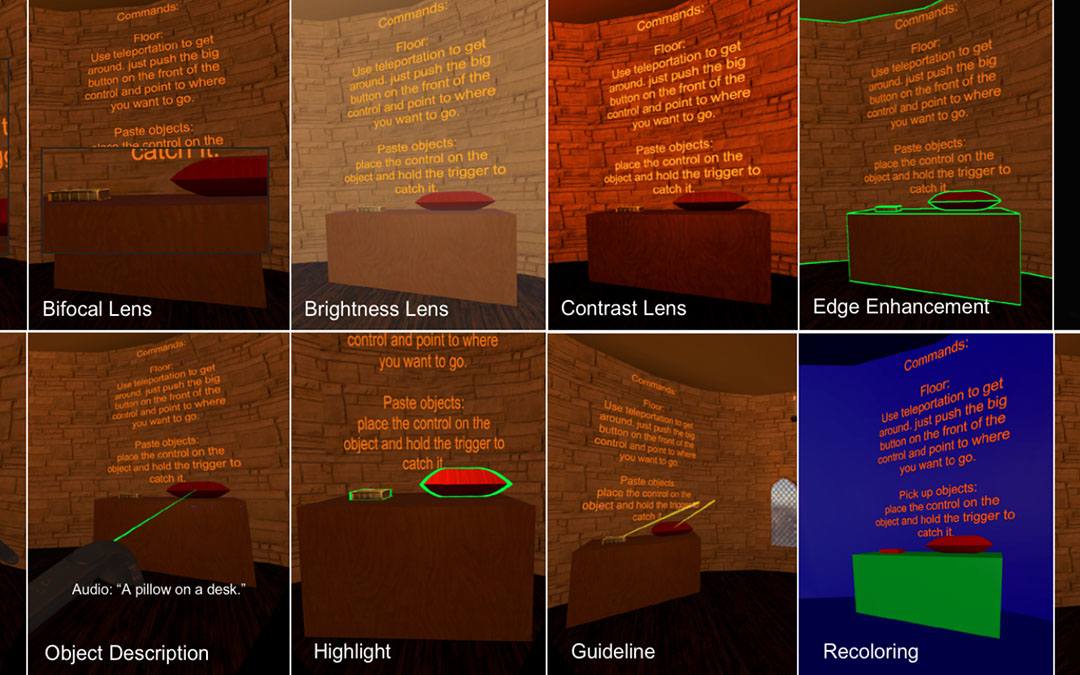

SeeingVR

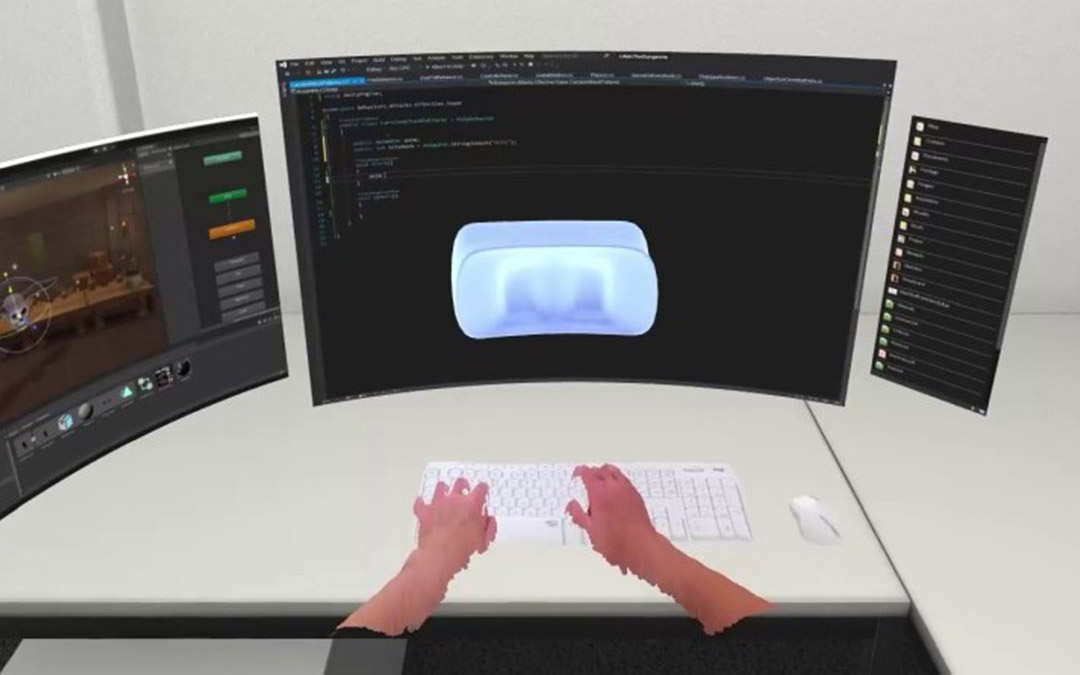

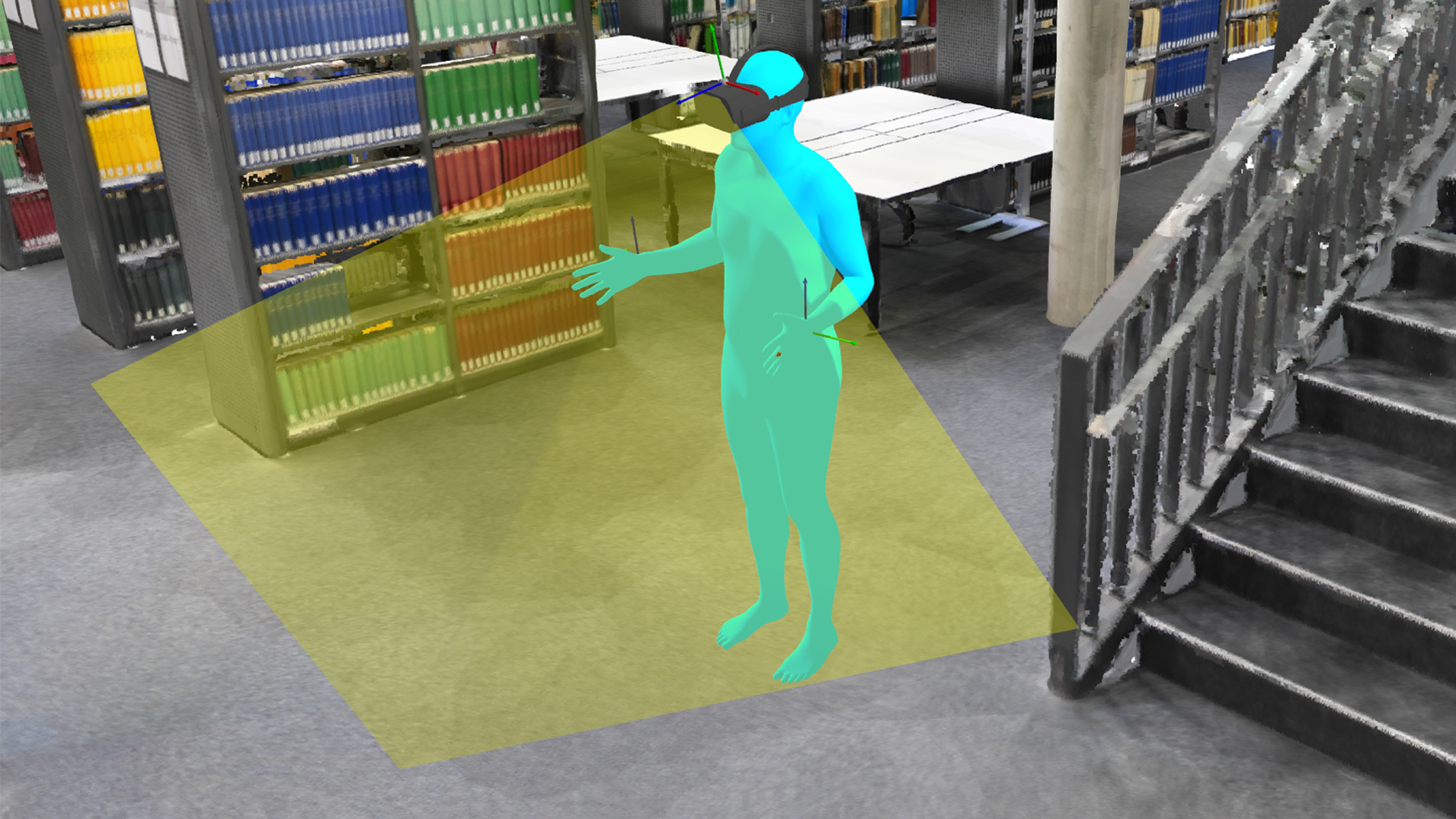

VRoamer

Sparse Haptic Proxy

Imaginary Reality Gaming

Imaginary Devices

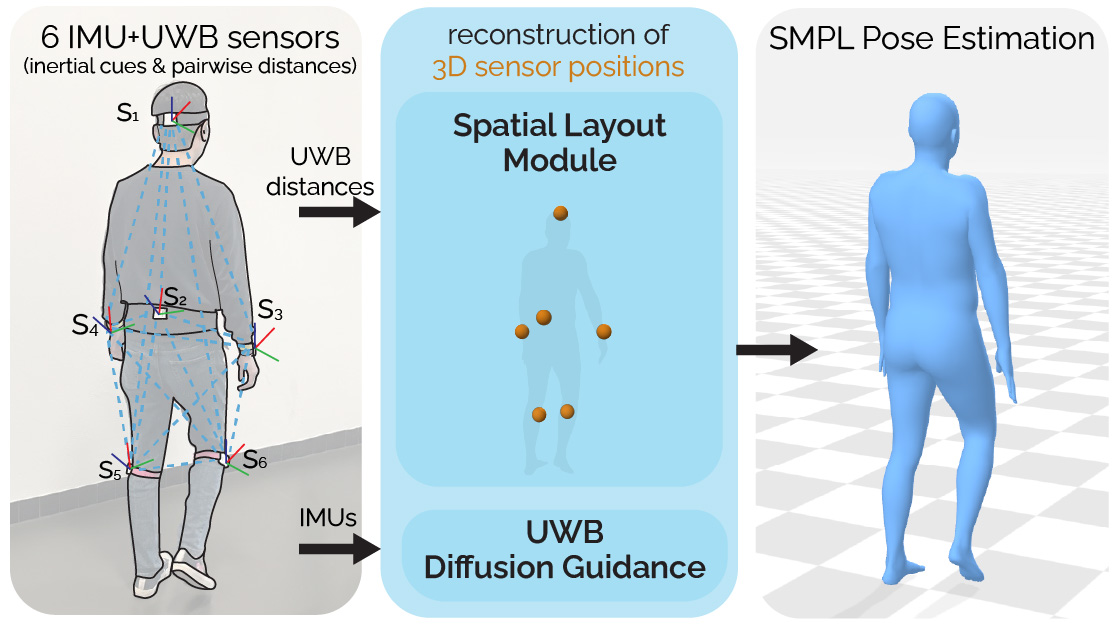

Egocentric perception

Perception from body-worn and first-person sensors for understanding pose, action, and interaction.

Ultra Diffusion Poser

Frequency-Weighted Neural Kalman Filters

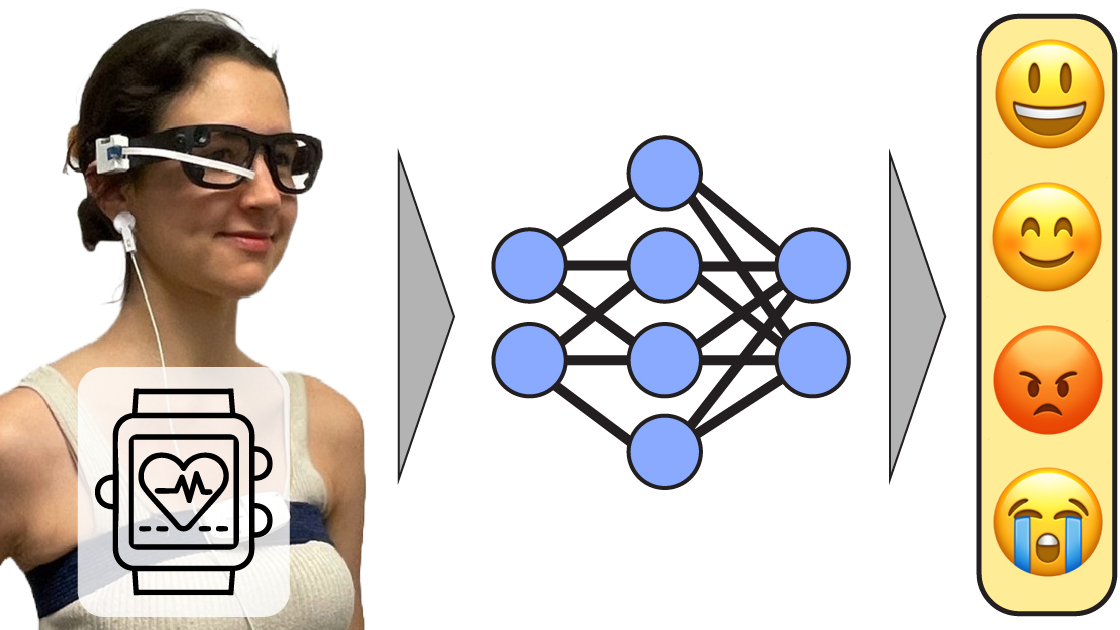

egoEMOTION

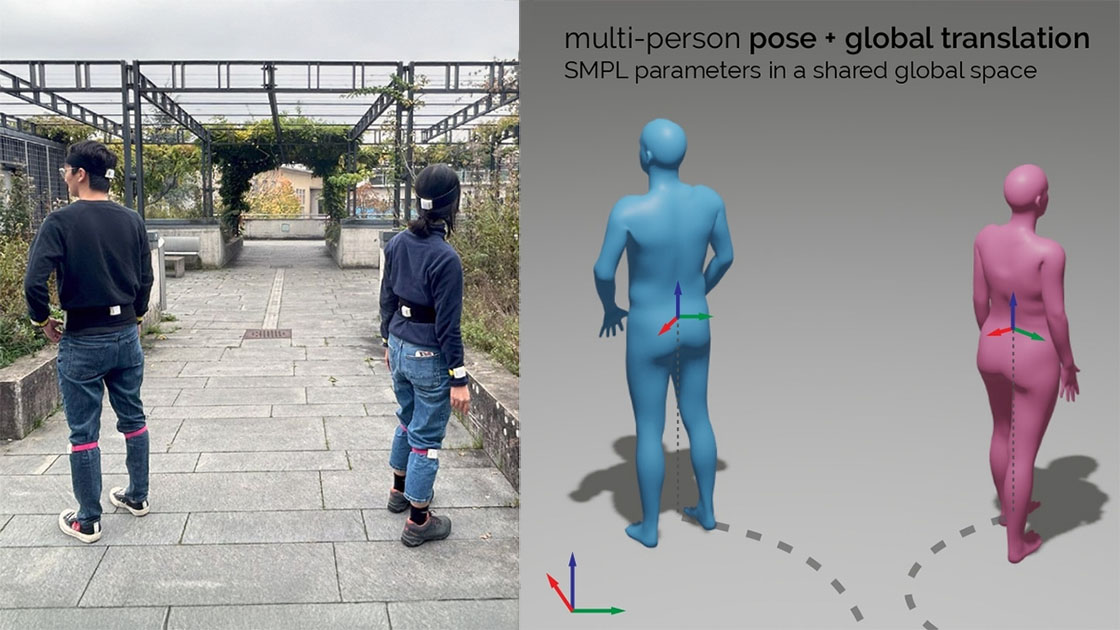

Group Inertial Poser

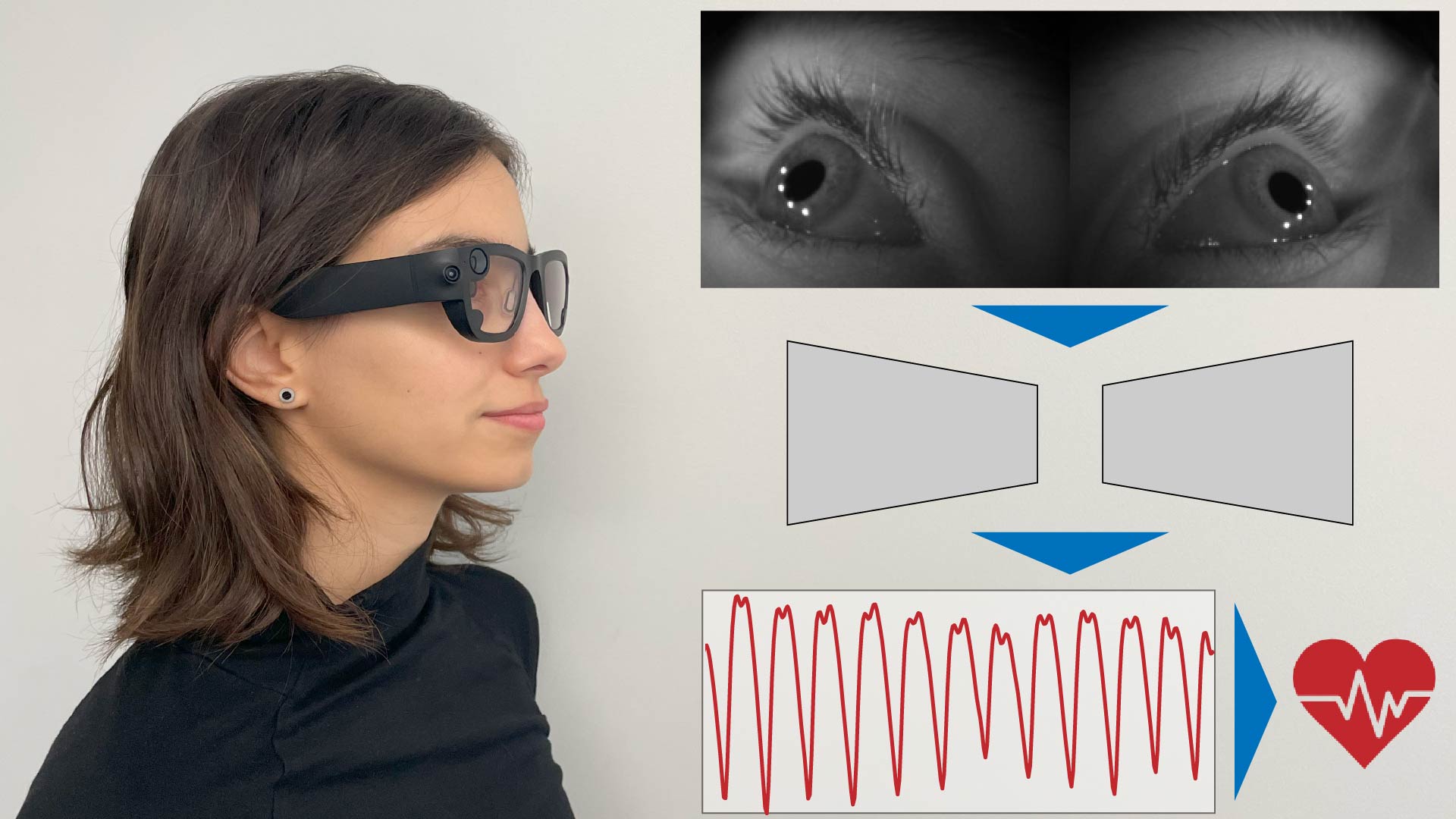

egoPPG

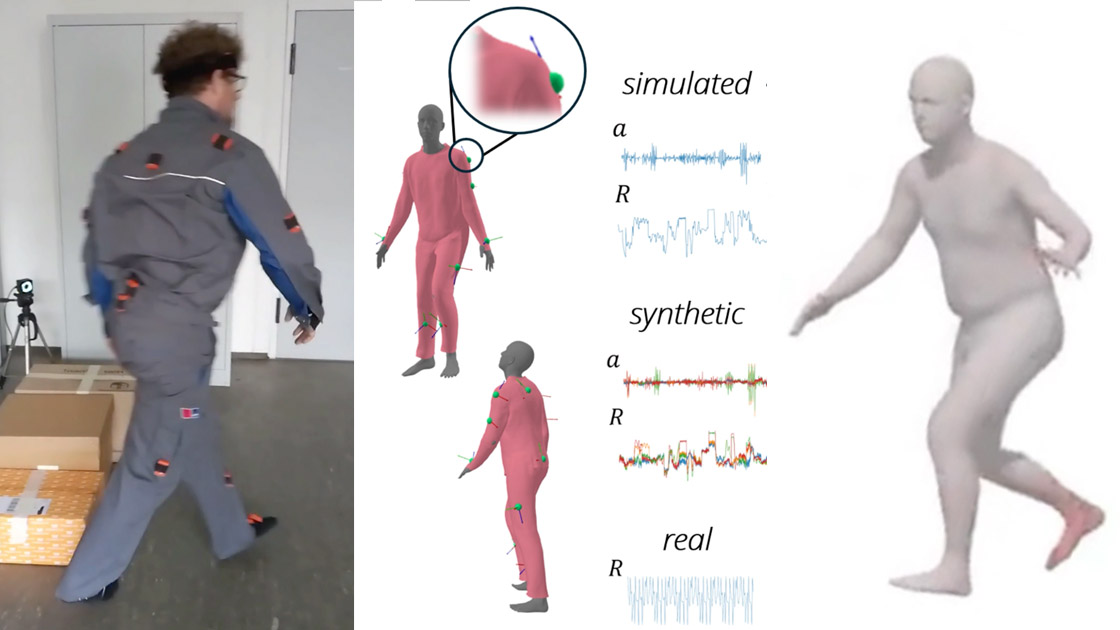

Garment Inertial Poser

EgoPressure

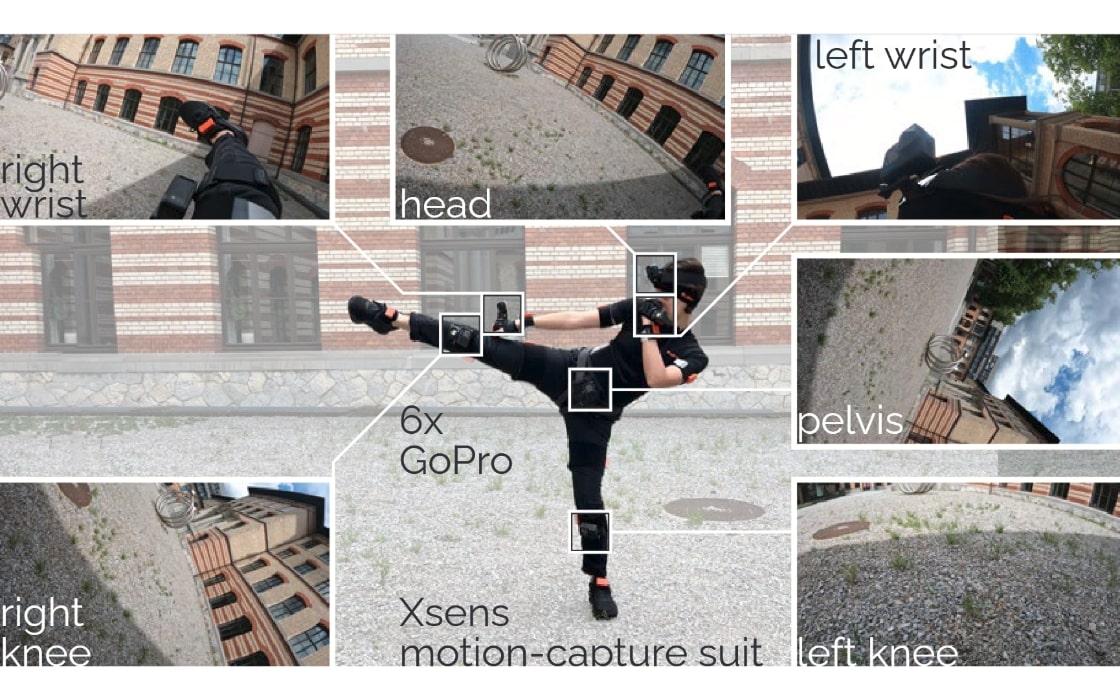

EgoSim

TouchInsight

MANIKIN

EgoPoser

Ultra Inertial Poser

Structured Light Speckle

HOOV

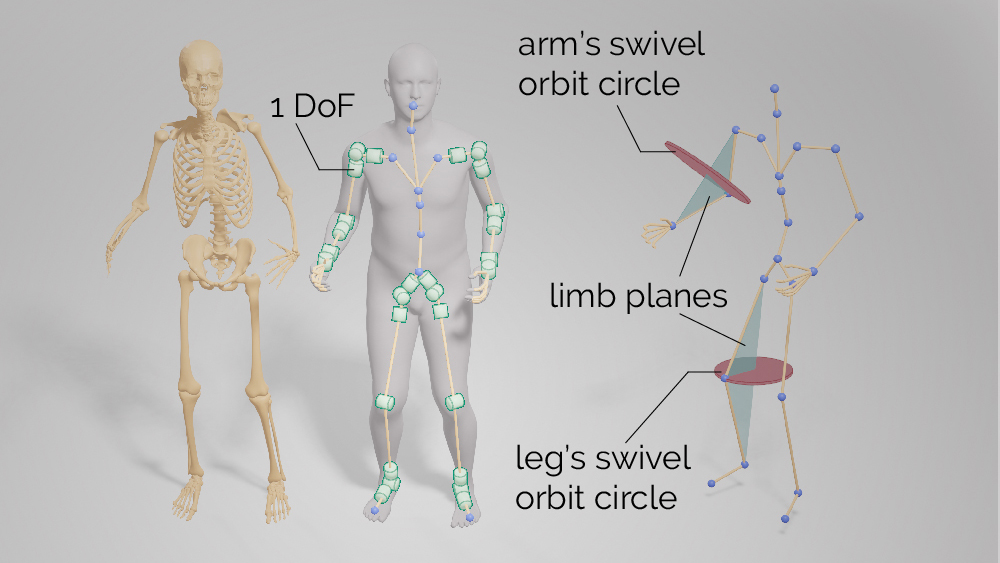

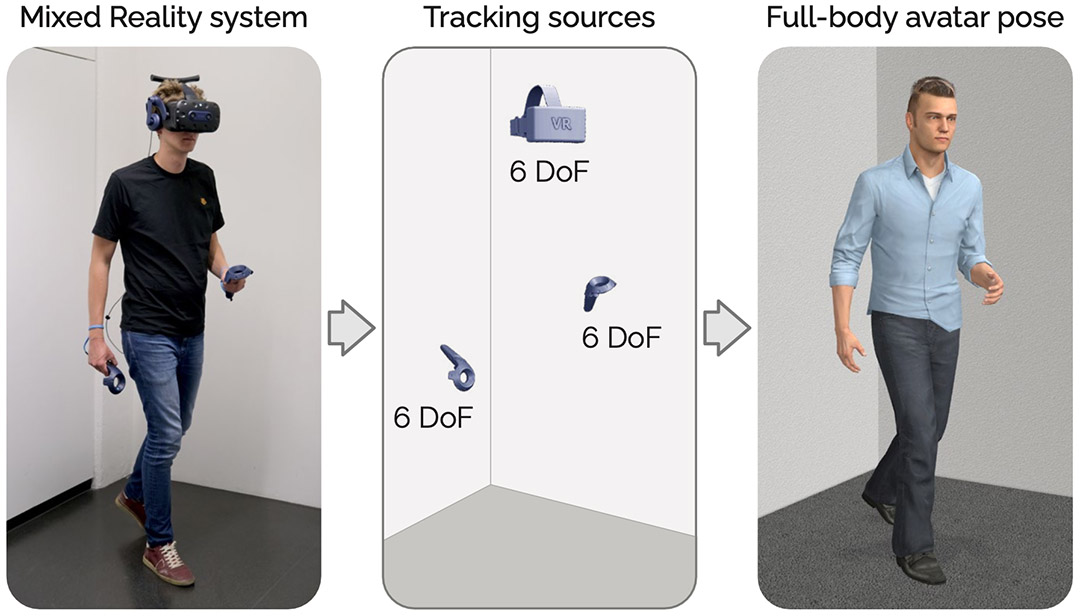

AvatarPoser

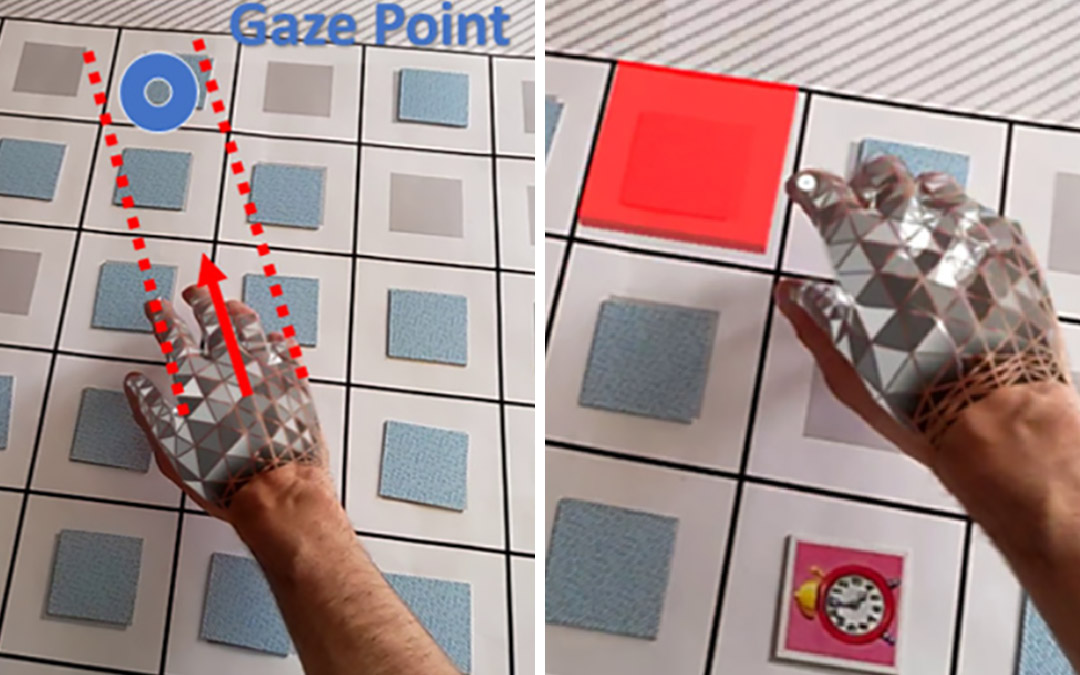

Gaze comes in handy

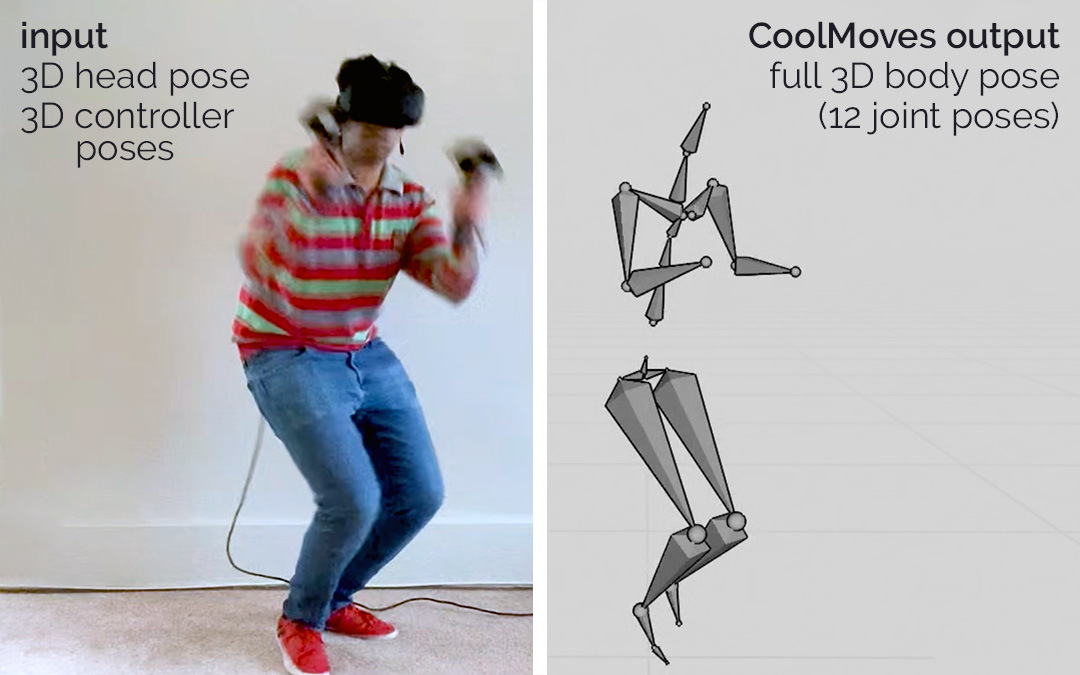

CoolMoves

Privacy-Preserving Ego-Motion Estimation

Imaginary Phone

Input decoding & devices

Sensing hardware, haptics, physical computing, and interaction techniques for expressive input and output.

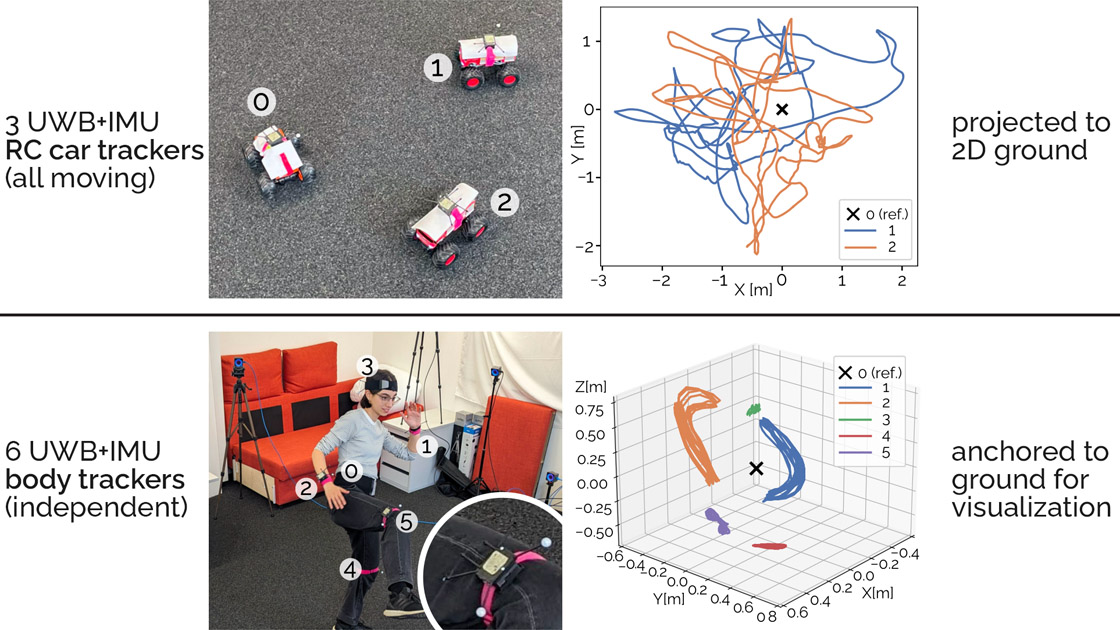

Relative Position Tracking on Moving Nodes without Anchors

HistoLab VR

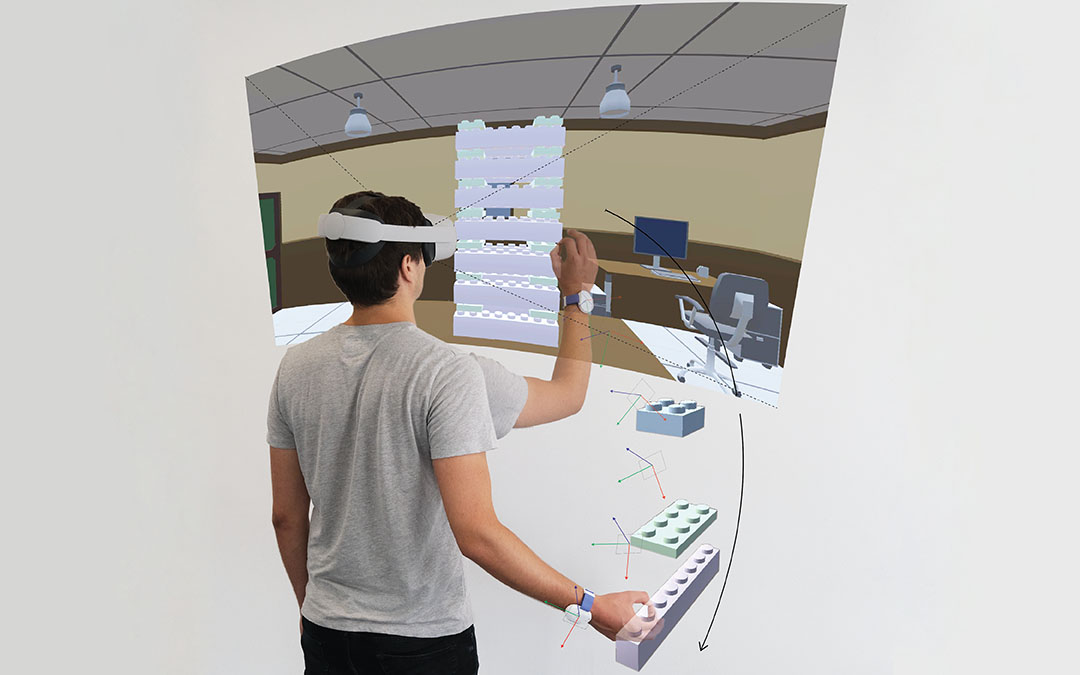

HandyCast

DeltaPen

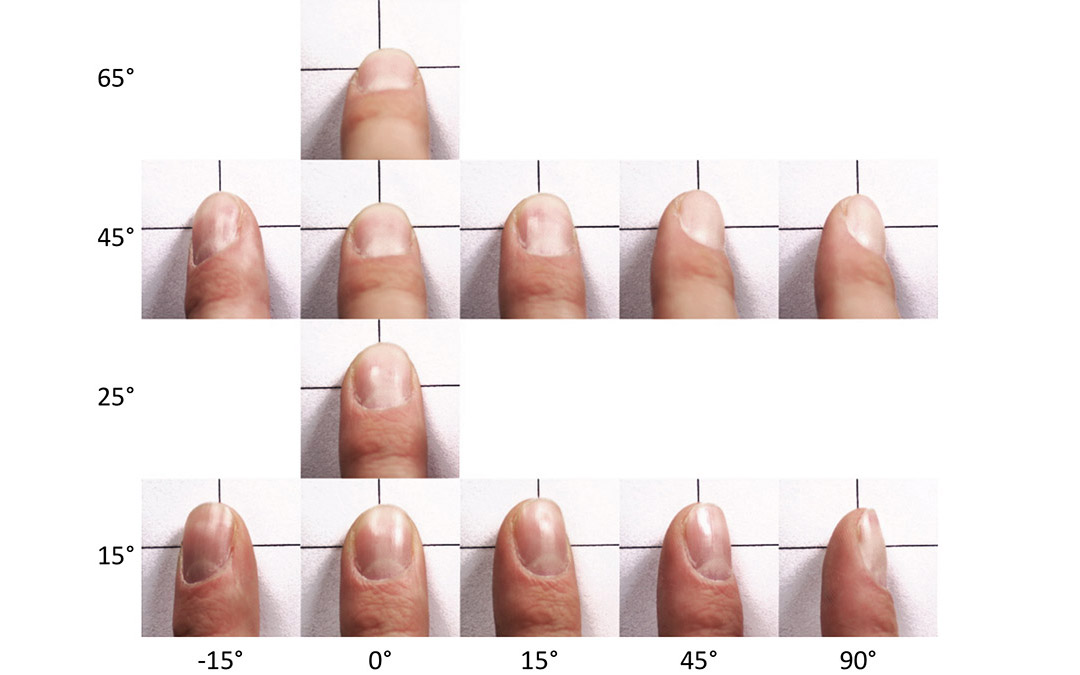

TouchPose

CapContact

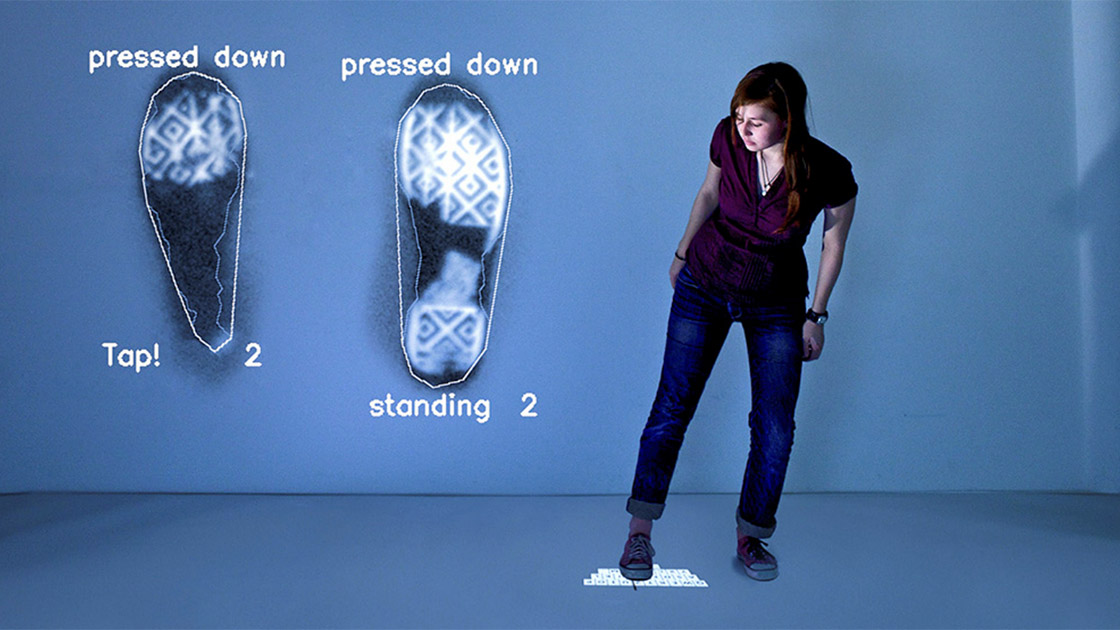

TapID

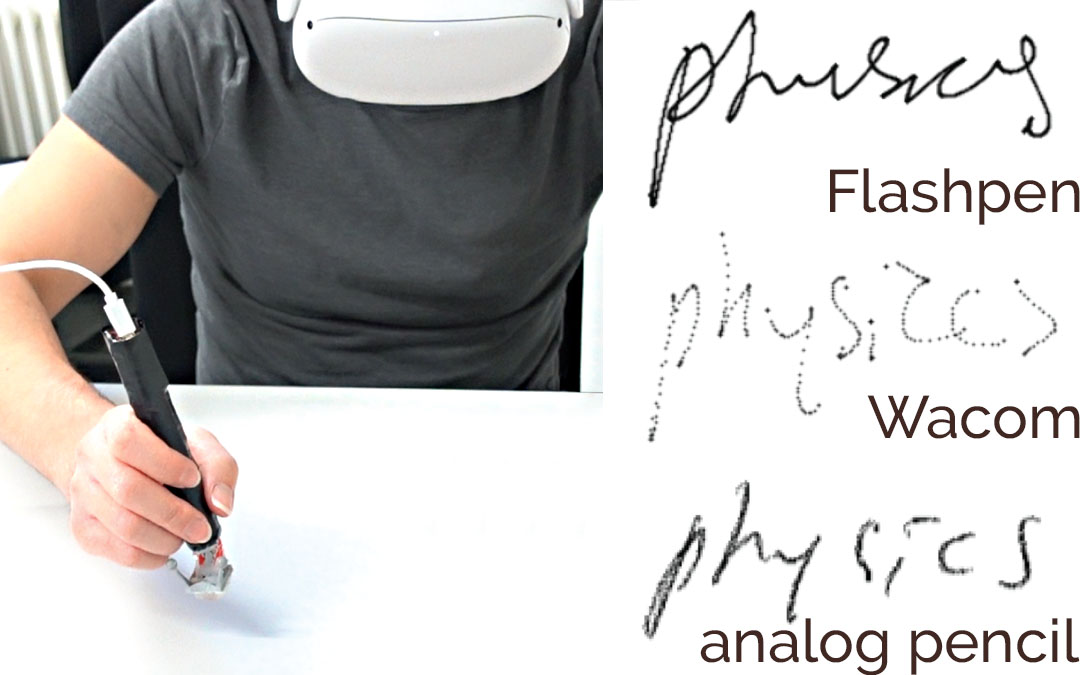

Flashpen

Haptic PIVOT

Omni

Tilt-Responsive Techniques for Digital Drawing Boards

Virtual Reality Without Vision

GazeConduits

CapstanCrunch

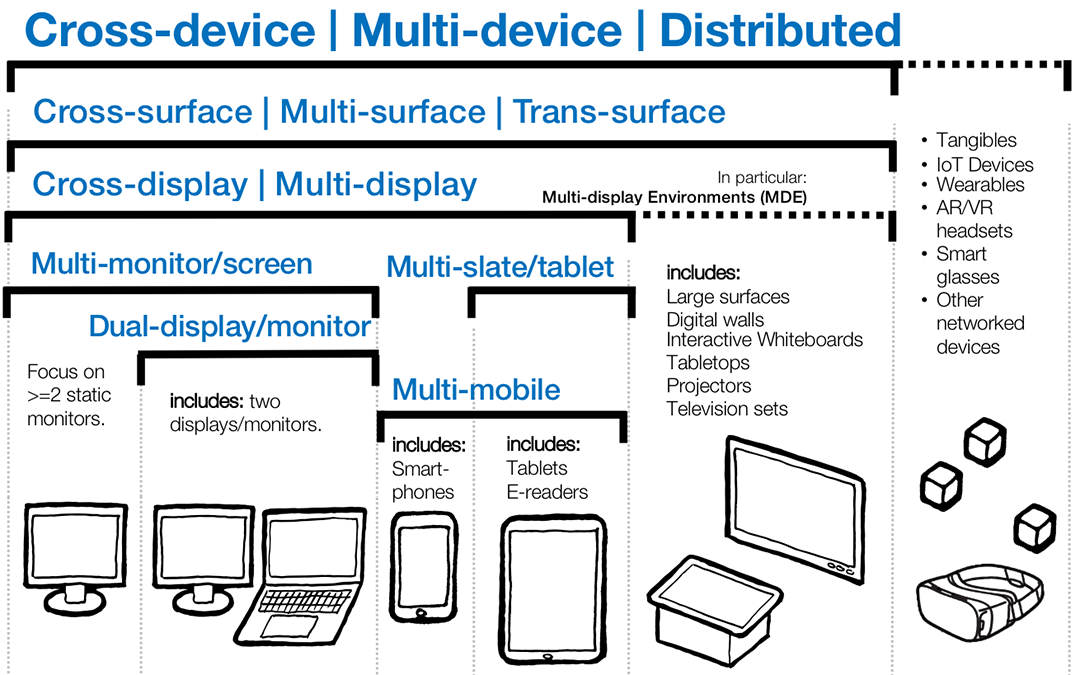

Cross-Device Taxonomy

Sensing Posture-Aware Pen+Touch Interaction on Tablets

TORC

Project Zanzibar

Haptic Revolver

CLAW

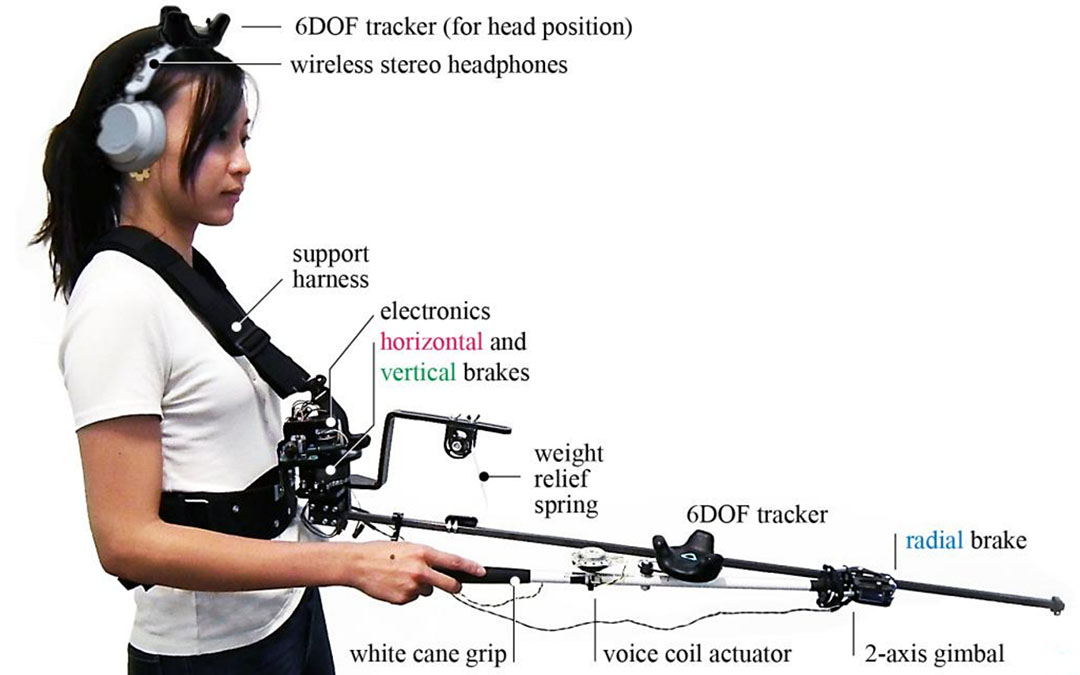

Enabling People with Visual Impairments to Navigate Virtual Reality

Haptic Links

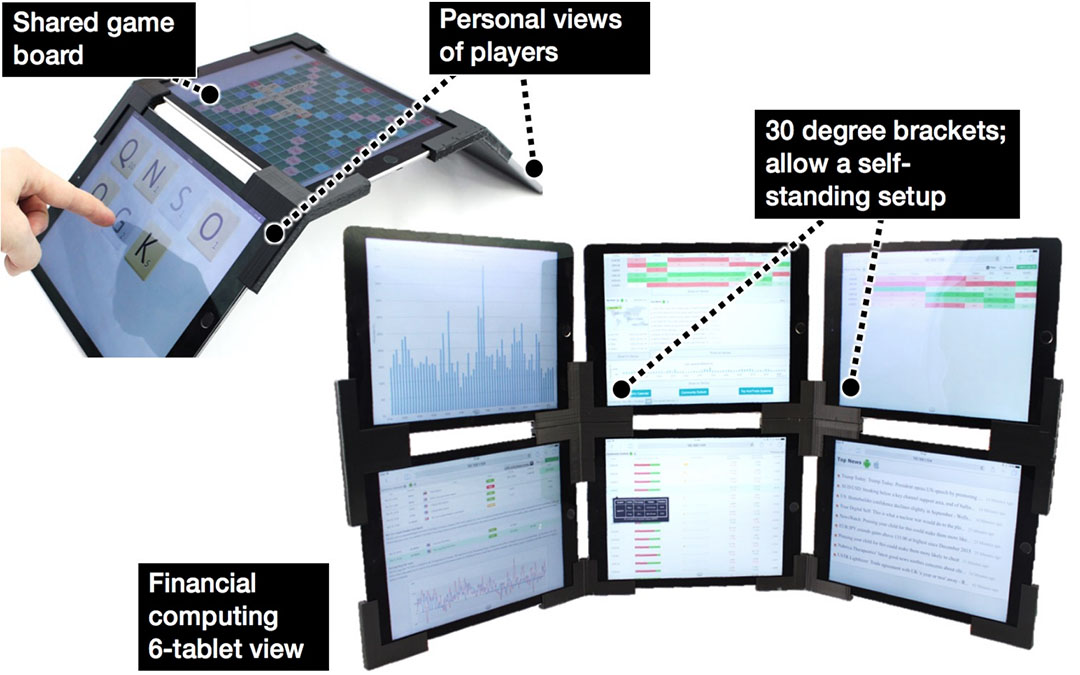

SurfaceConstellations

PolarTrack

Finding Common Ground

NormalTouch and TextureTouch

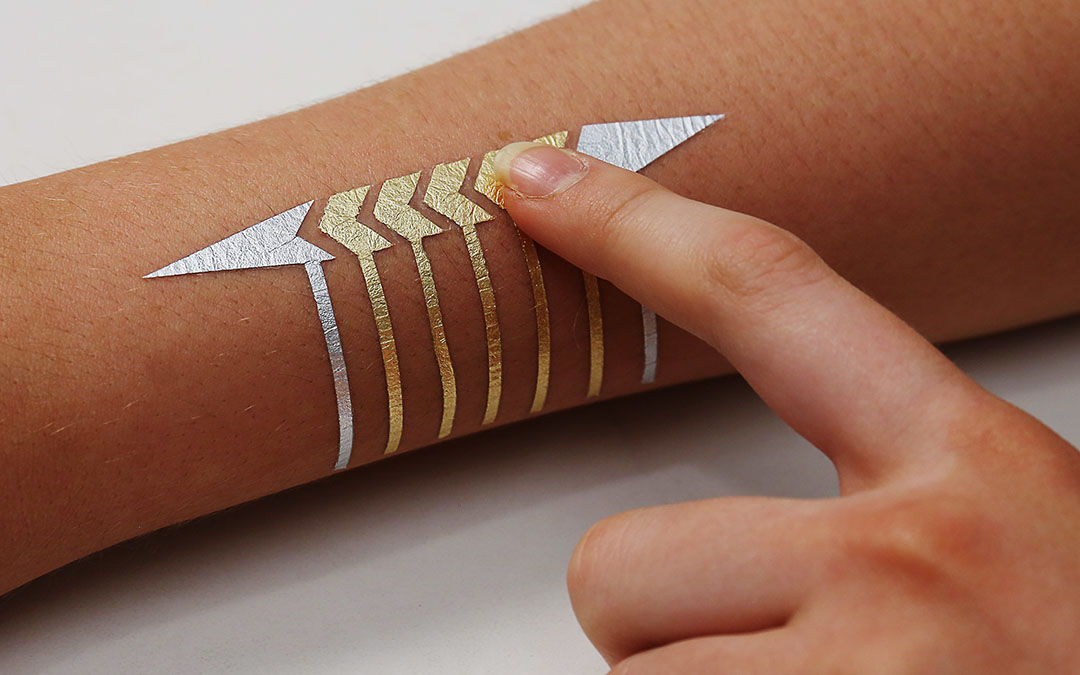

DuoSkin

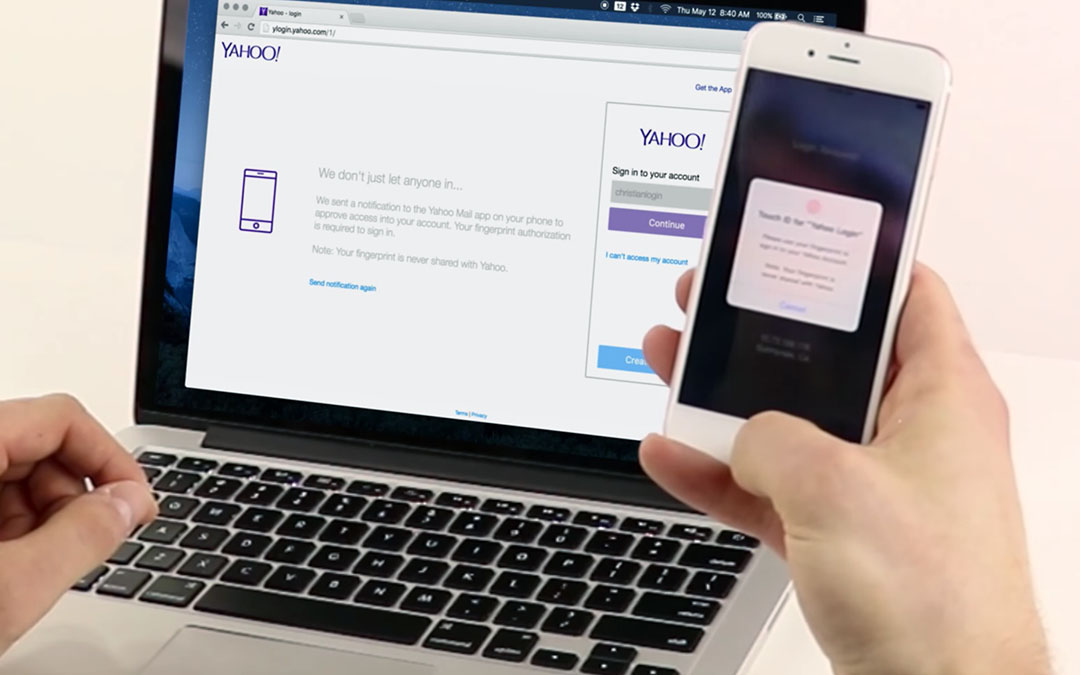

On-Demand Biometrics

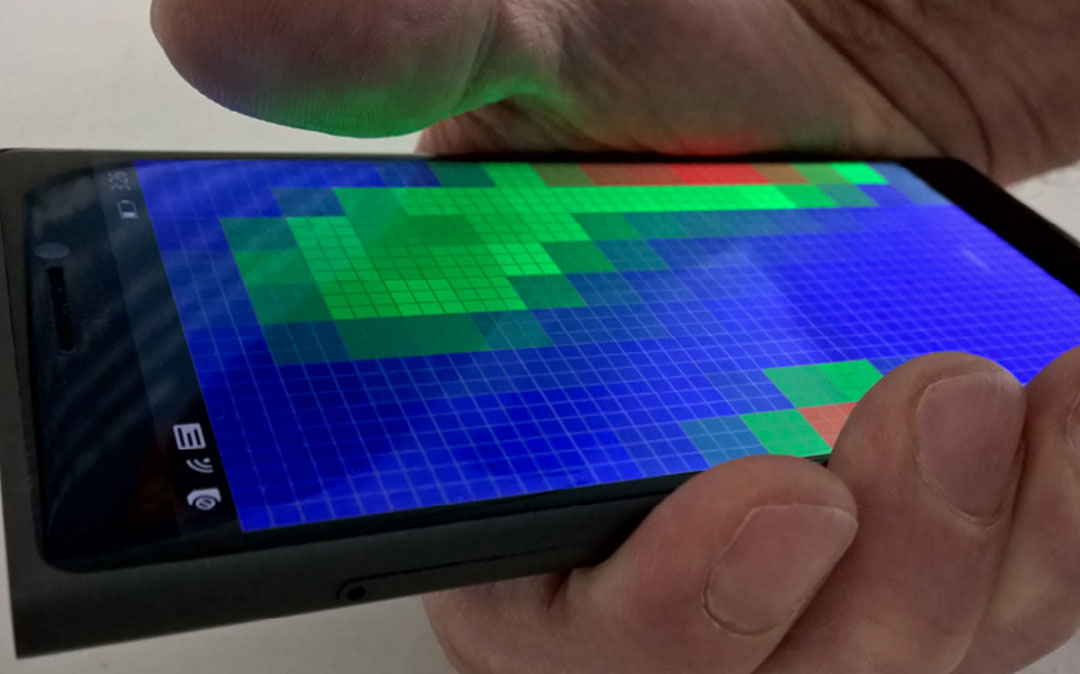

Pre-Touch Sensing for Mobile Interaction

Tracko

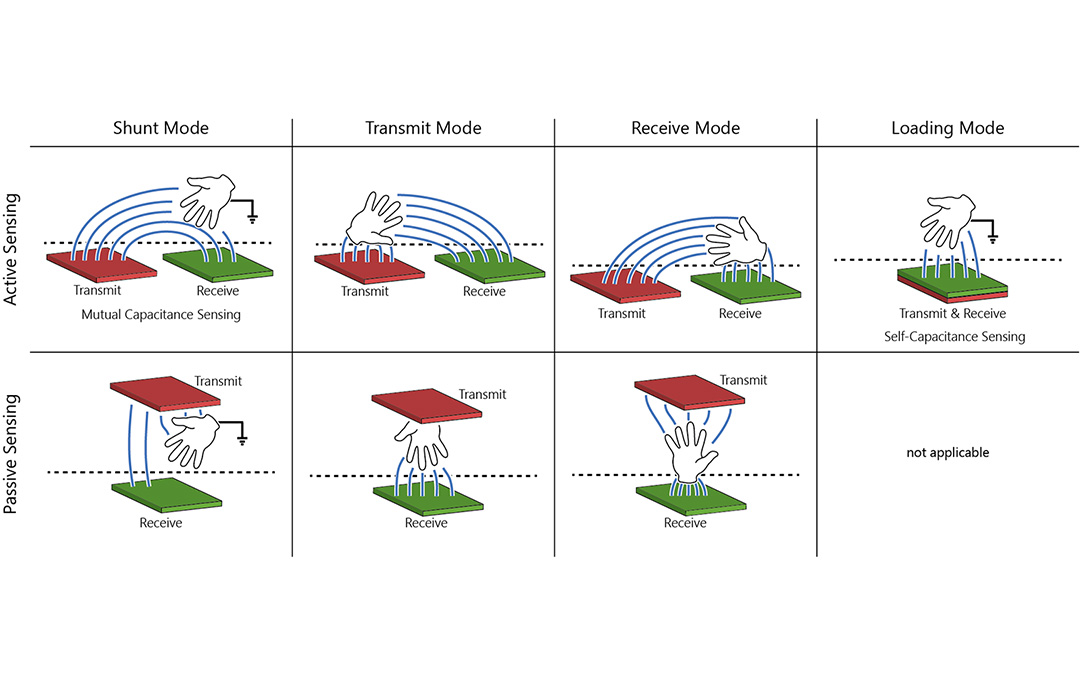

Biometric Touch Sensing

Second Screen

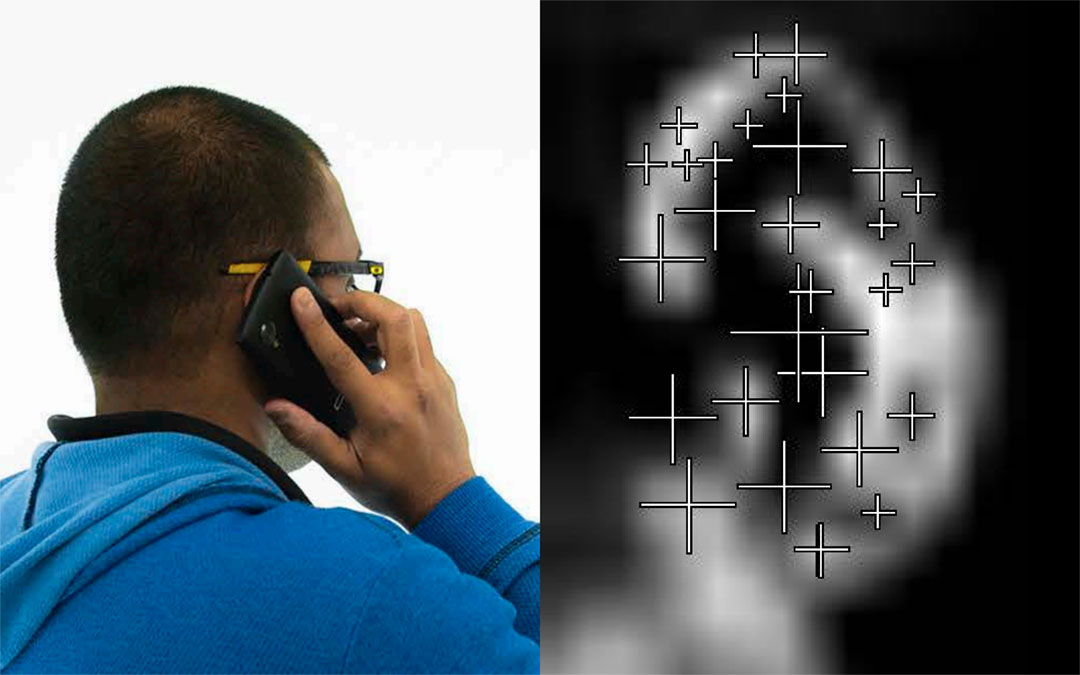

Bodyprint

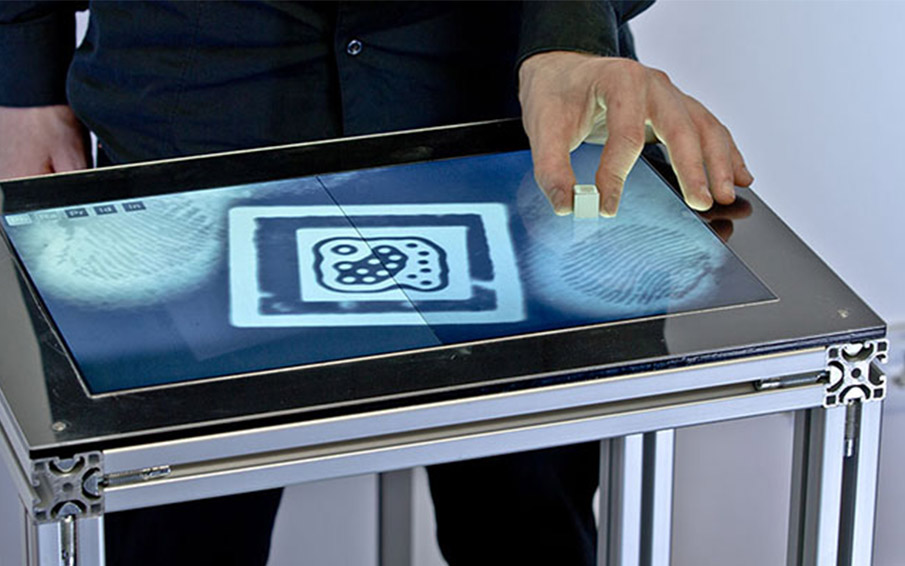

Fiberio

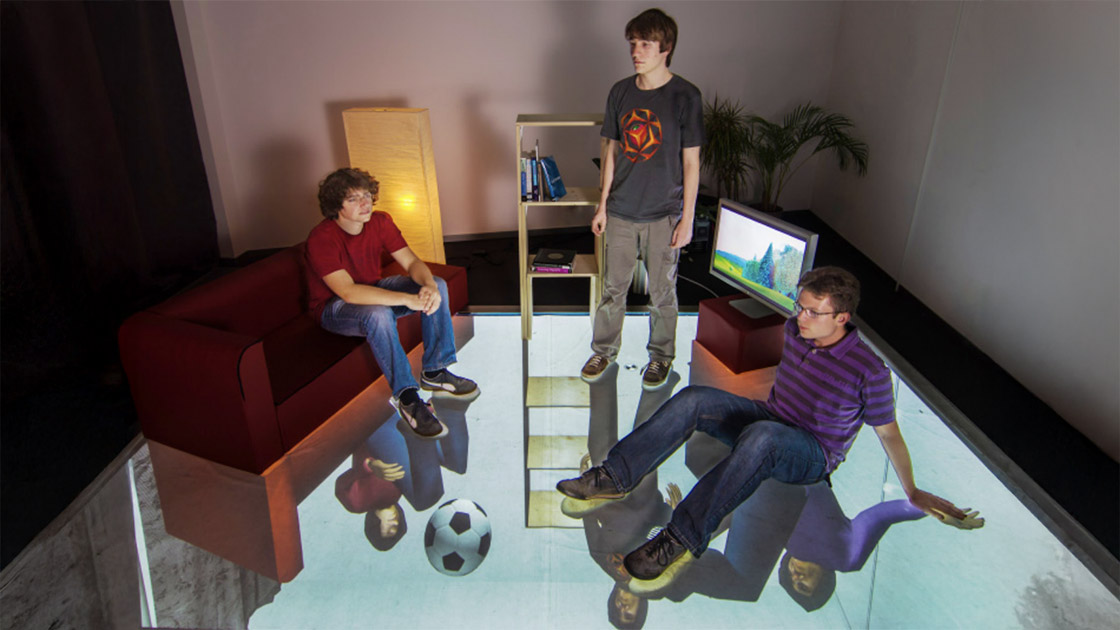

GravitySpace

Bootstrapper

Implanted User Interfaces

Data Miming

Multitoe

The Generalized Perceived Input Point Model

Relaxed Selection Techniques

User modeling

Models of human behavior, preference, ability, and performance used to personalize and optimize interfaces.

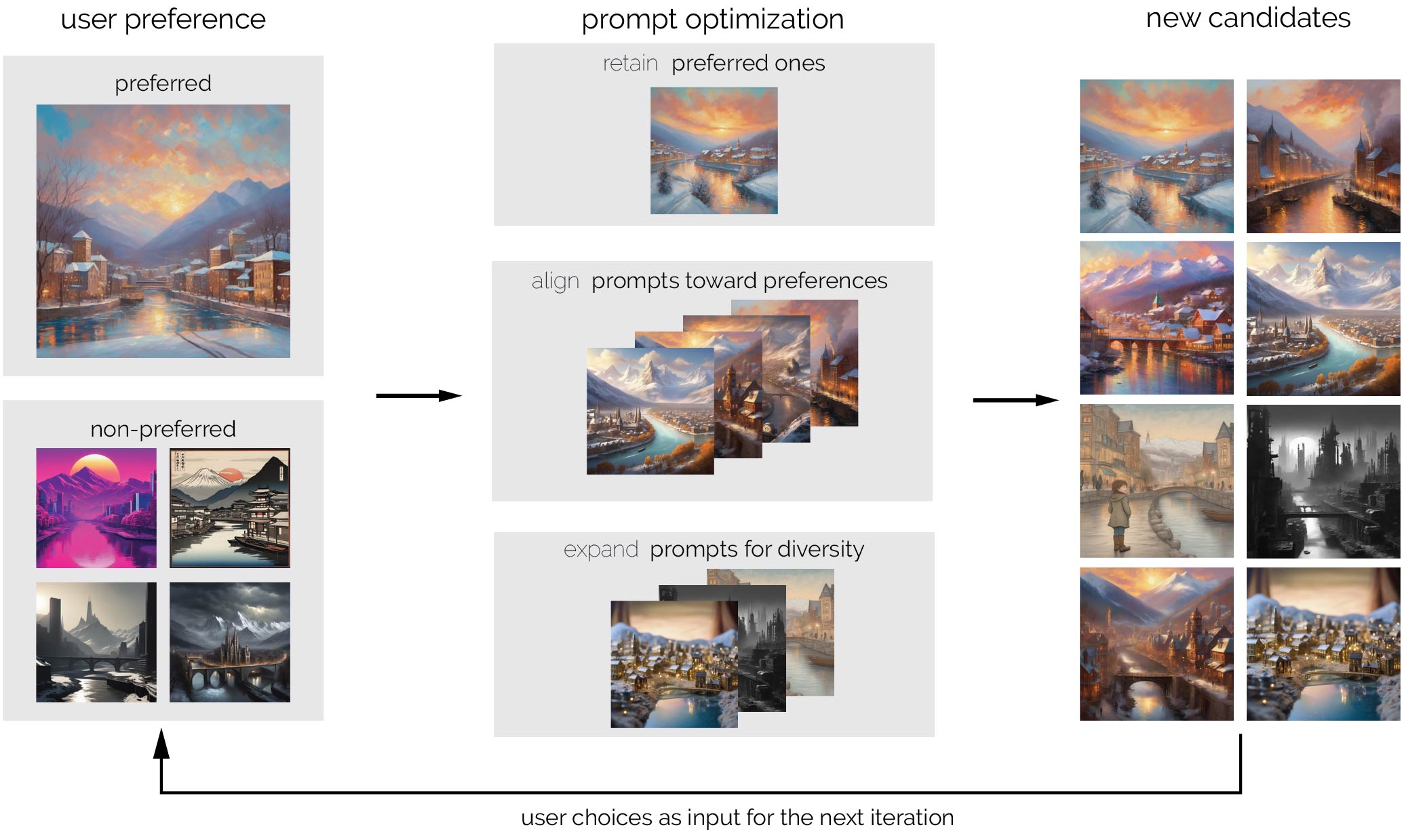

Preference-Guided Prompt Optimization

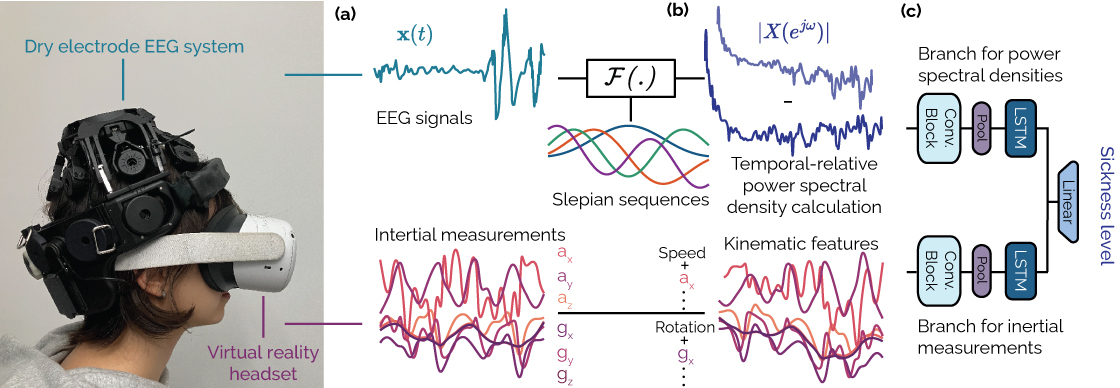

Cybersickness Detection Beyond Subjectivity

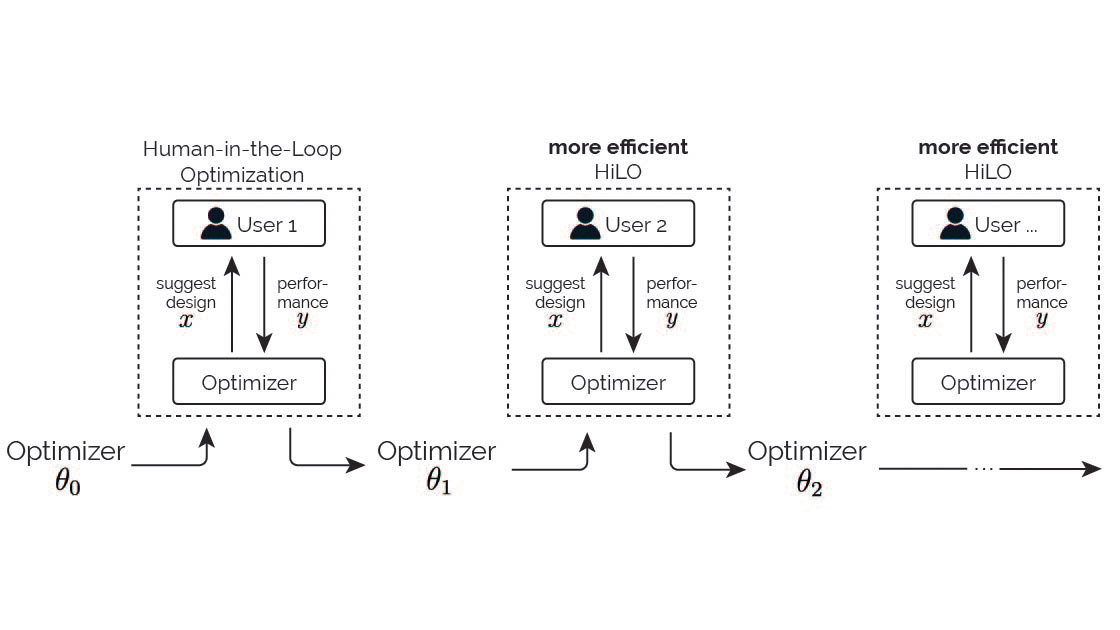

Continual Human-in-the-Loop Optimization

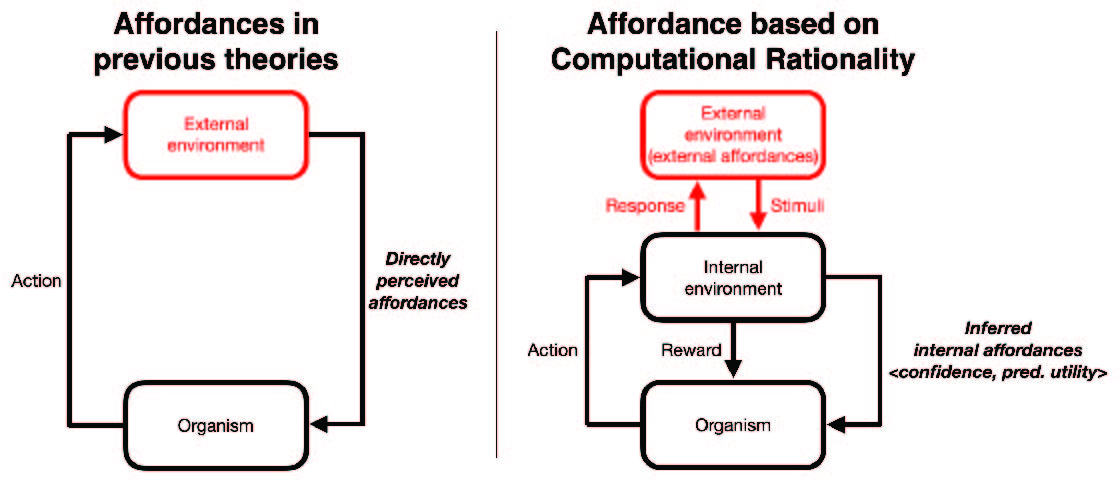

Redefining Affordance

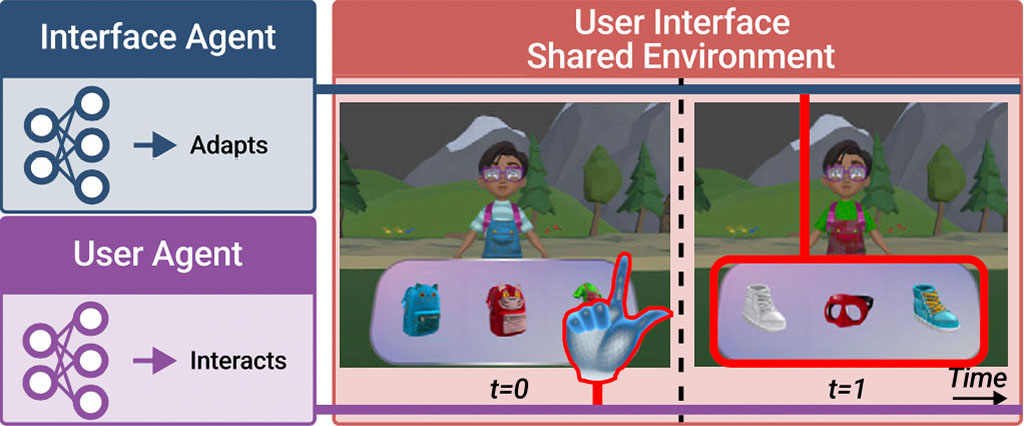

MARLUI

Synchronous vs. Asynchronous Reality

Controllers or Bare Hands?

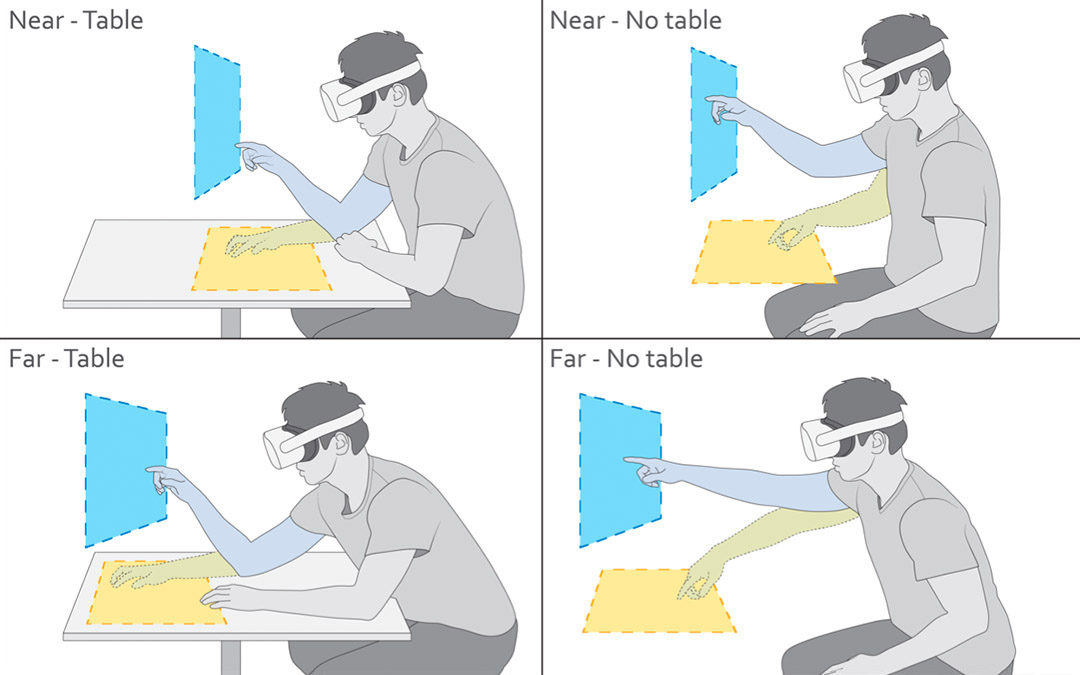

ComforTable User Interfaces

Demographic & Behavioral Correlates of Cybersickness

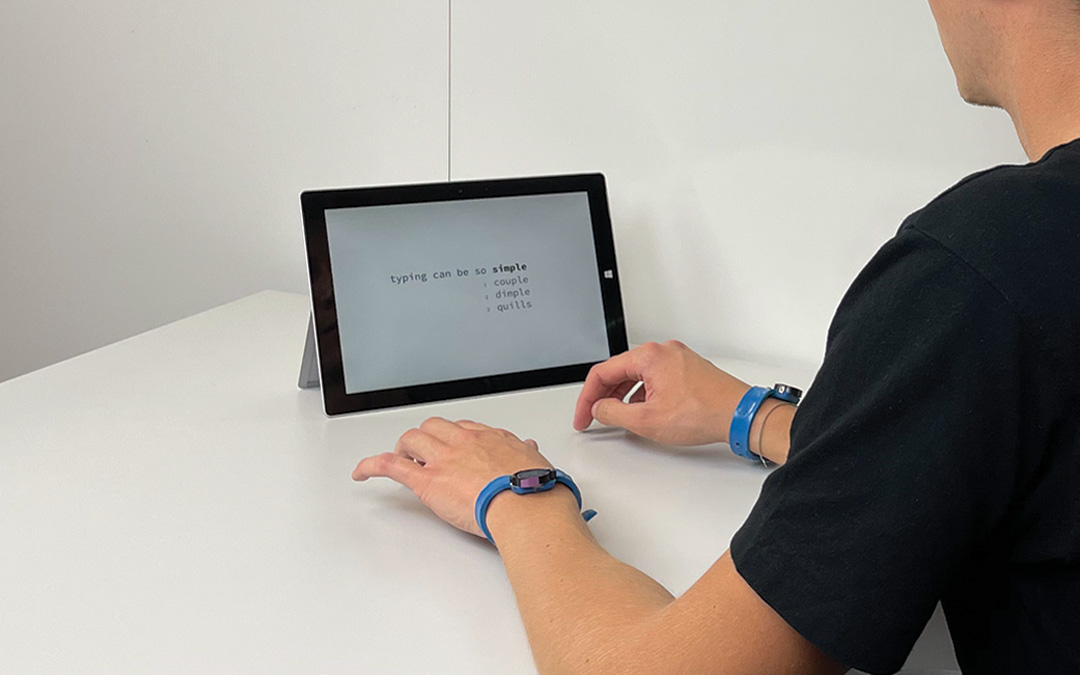

TapType

Understanding Multi-Device Usage Patterns

Understanding Touch

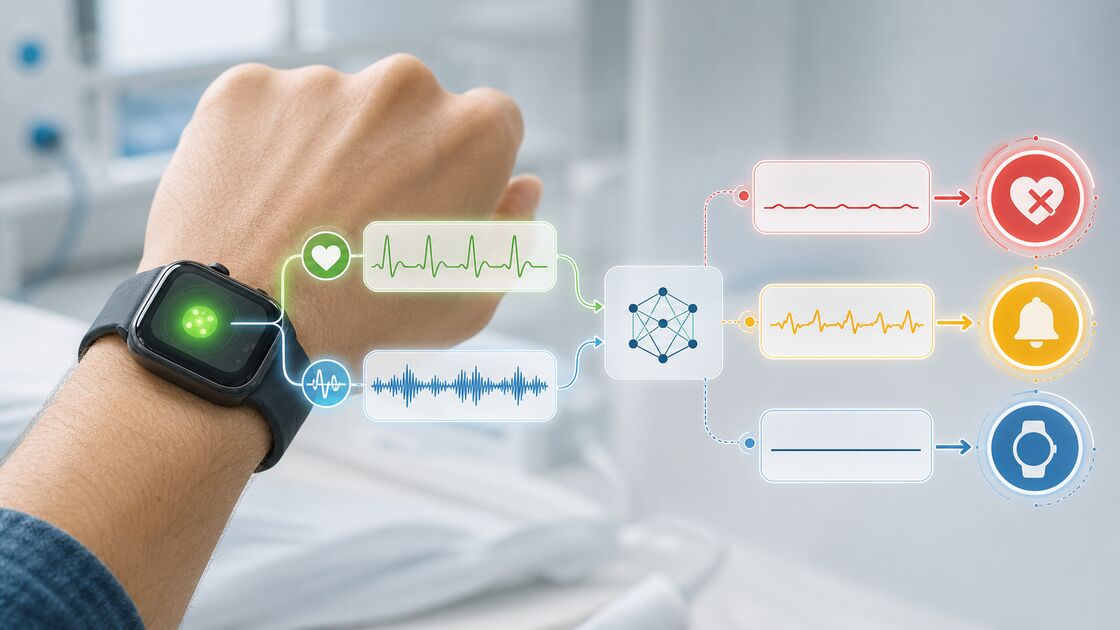

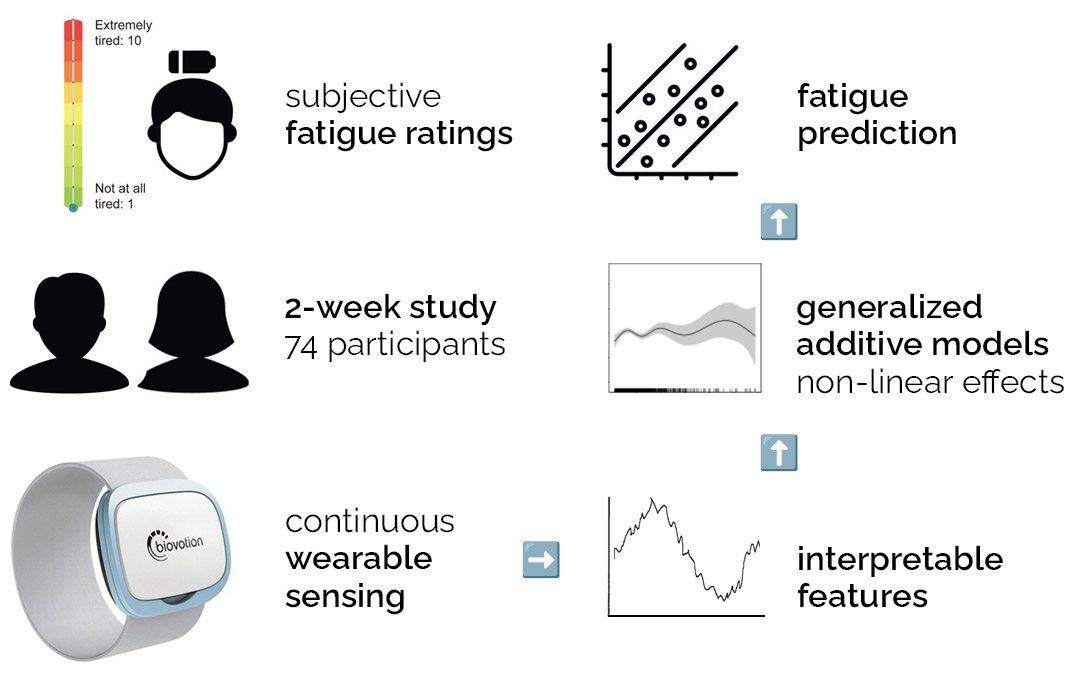

Digital biomarkers

Mobile and wearable sensing methods for measuring health, sleep, fatigue, physiology, and affective state.

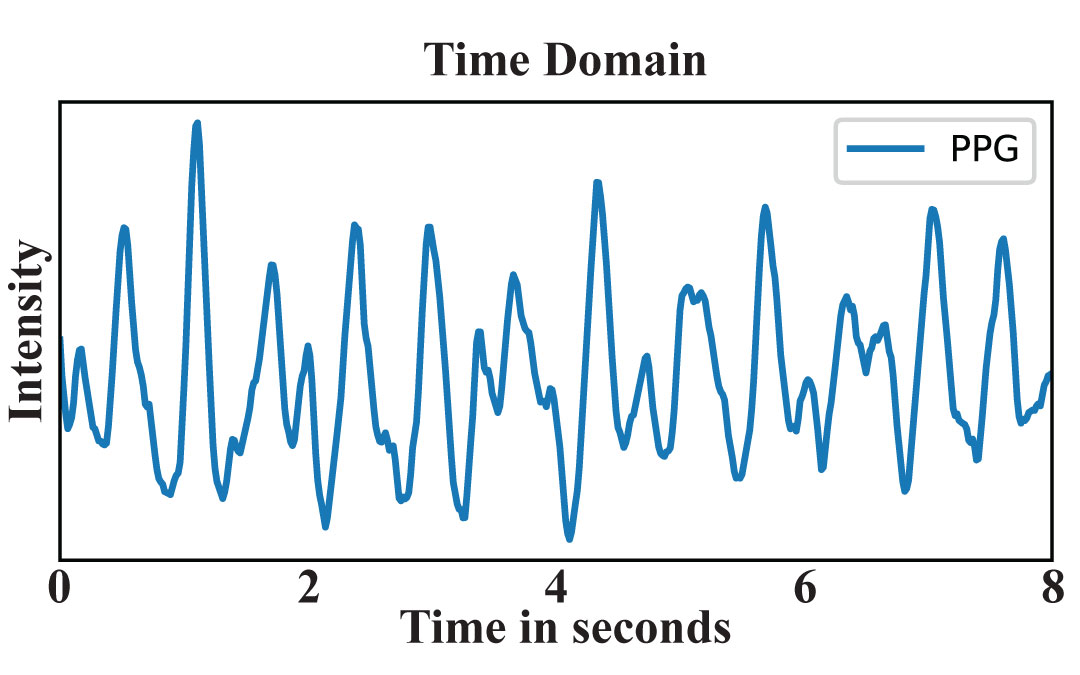

Heart Rate Variability Estimation

Loss of Pulse Detection

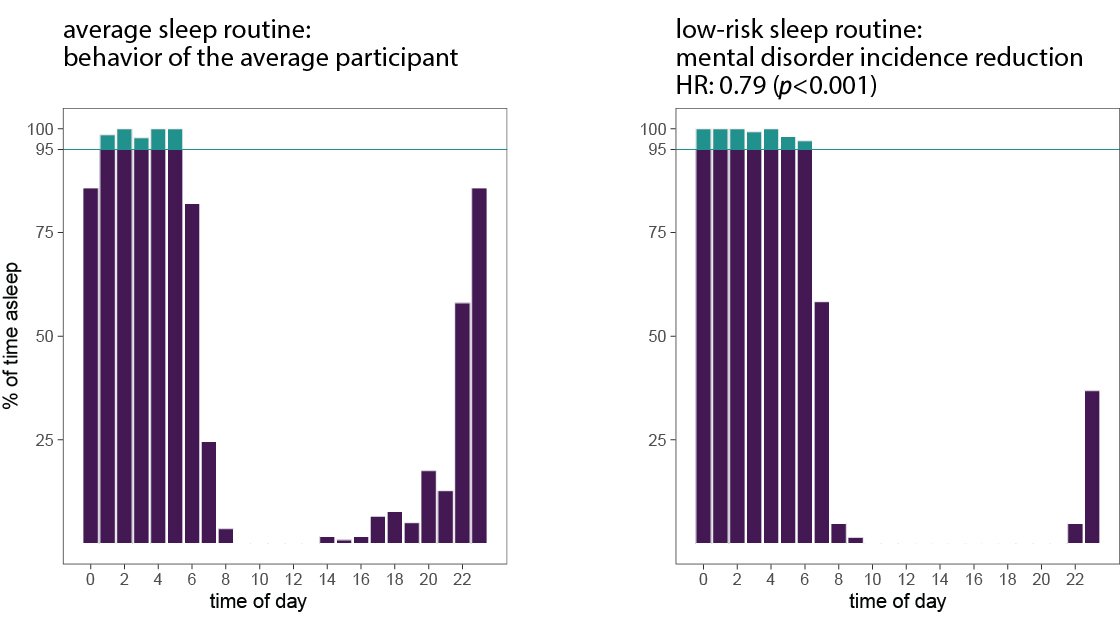

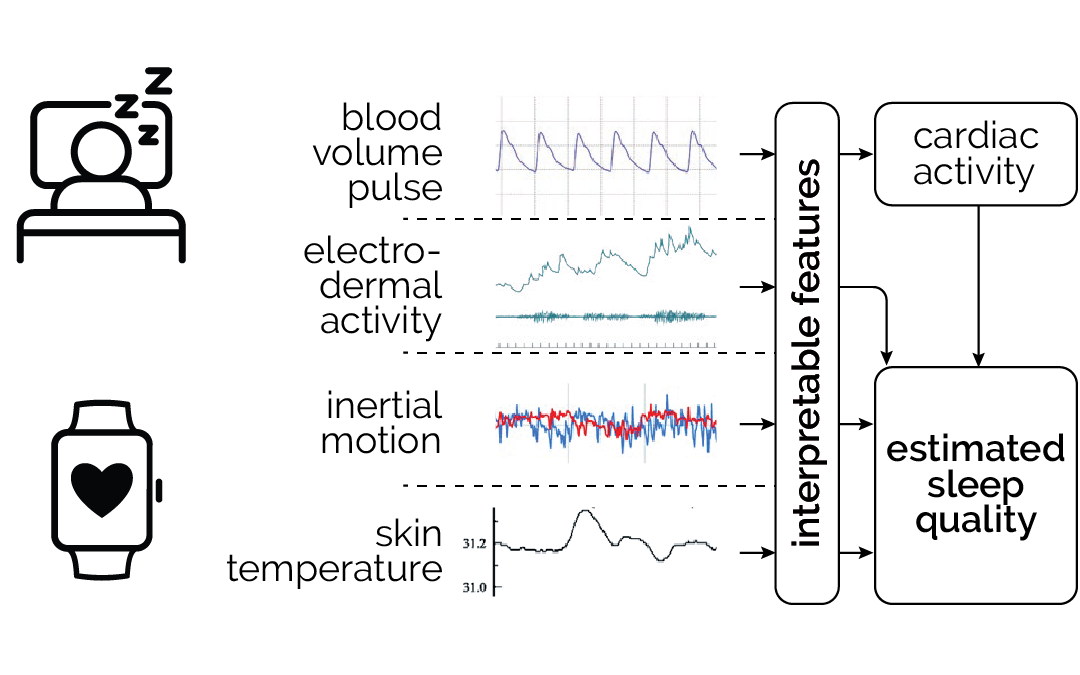

Beyond the hours slept

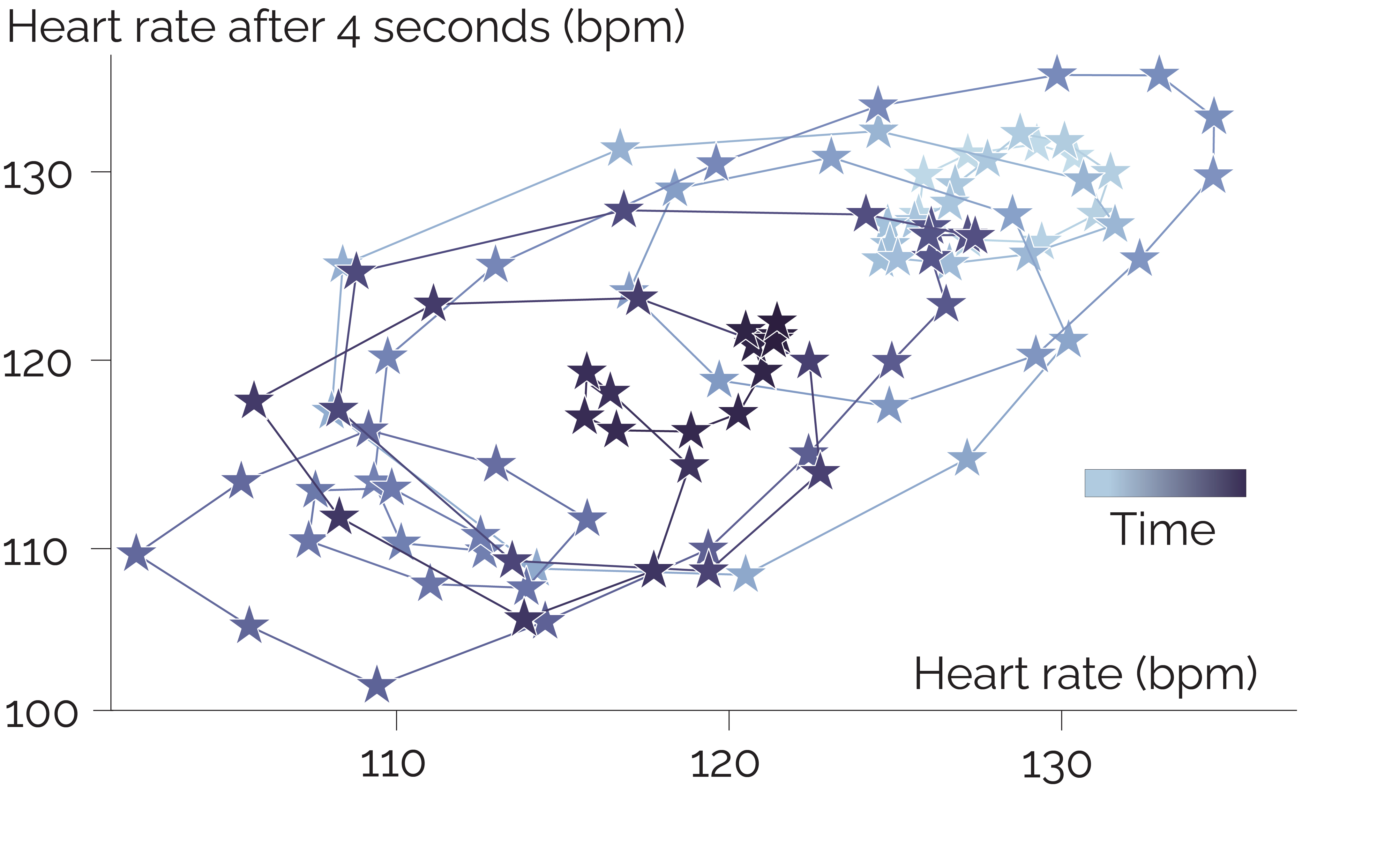

Learning Heart Rate Patterns

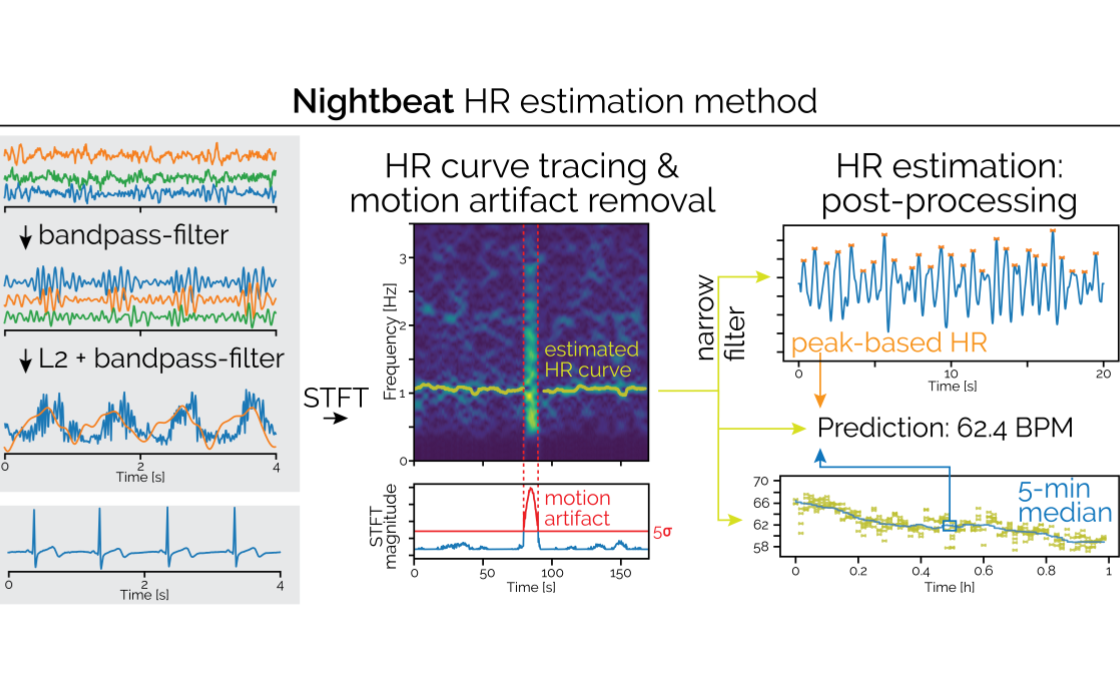

Nightbeat

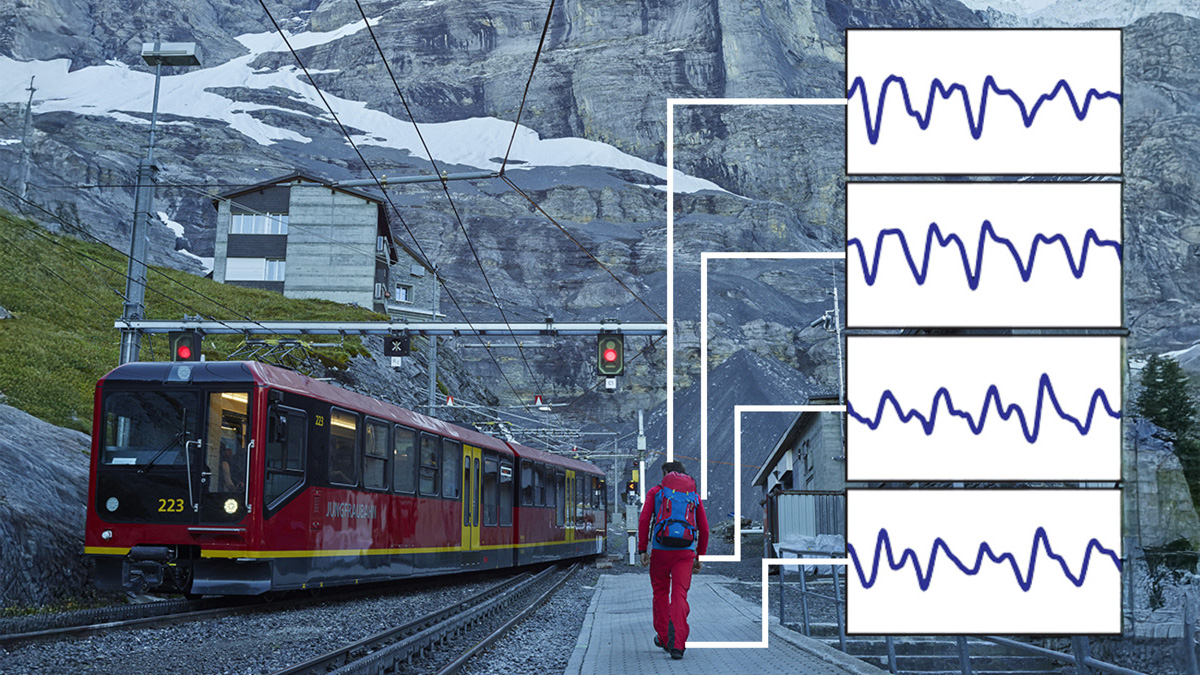

WildPPG

Respiro

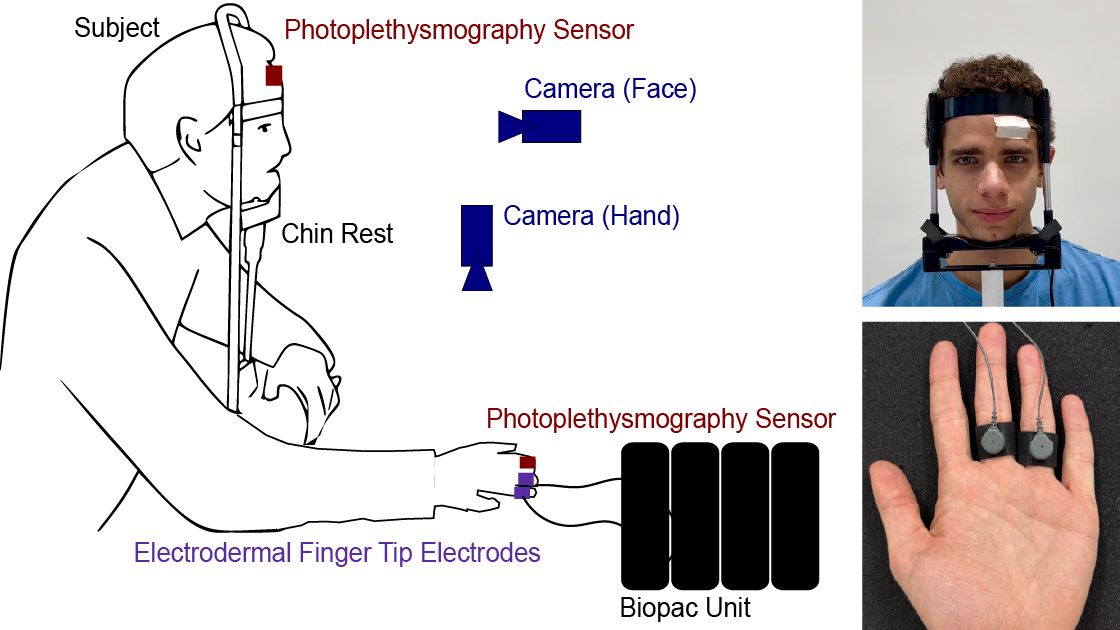

SympCam

Emotion Recognition for Chatbots

Tri-Spectral PPG

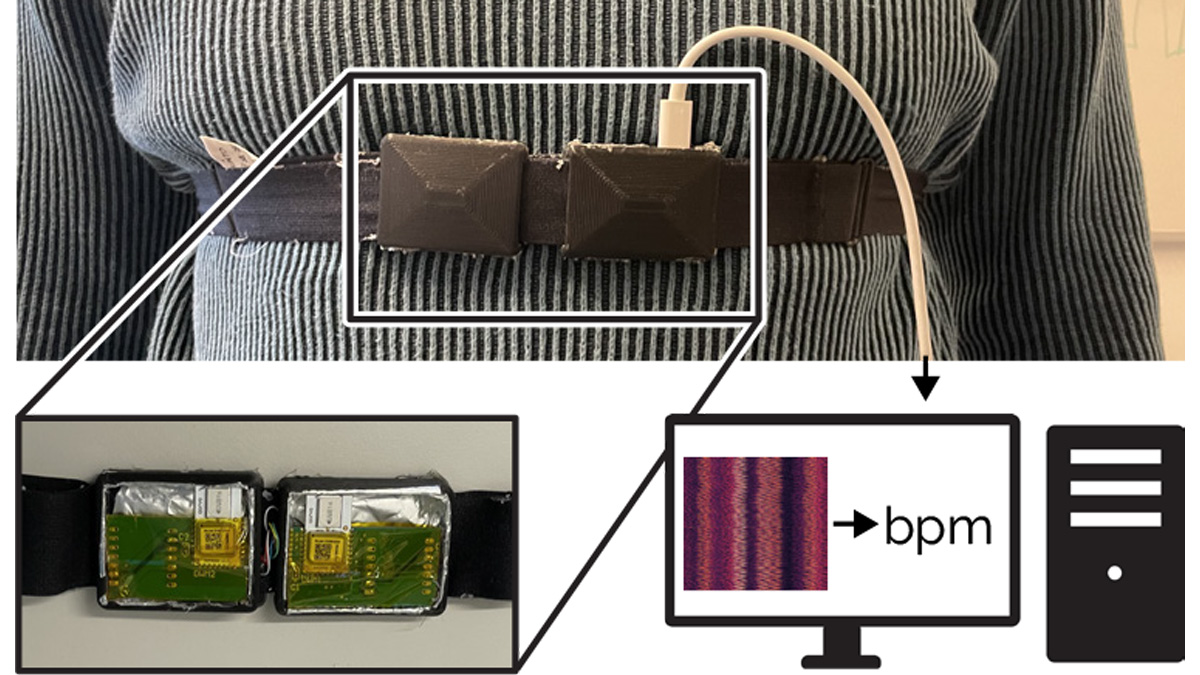

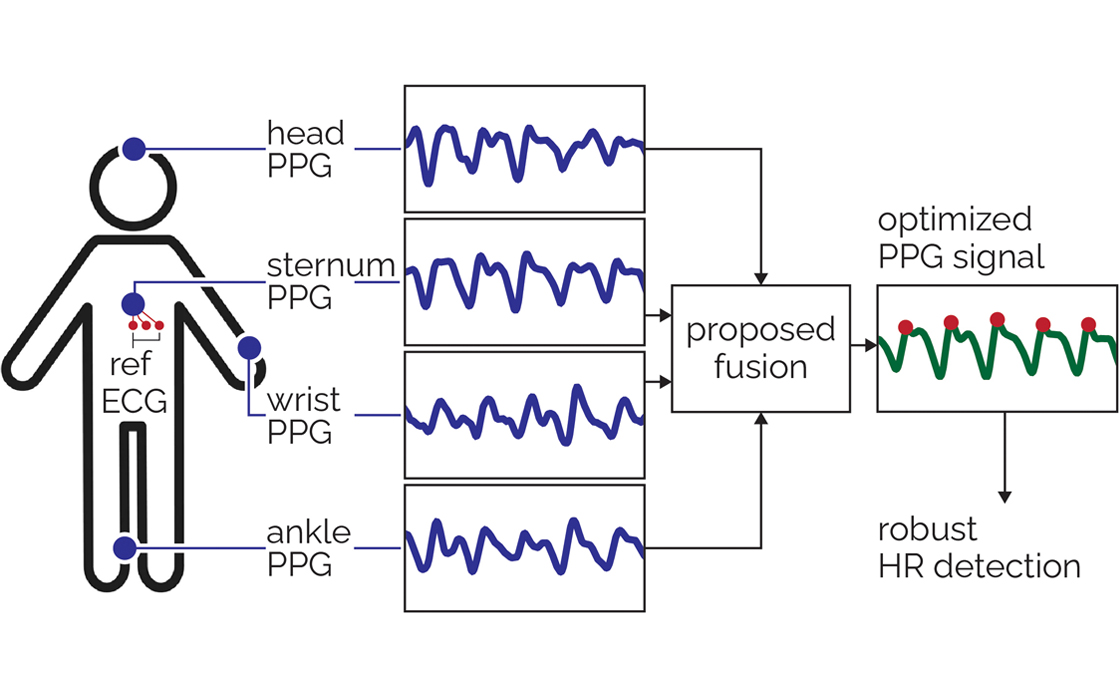

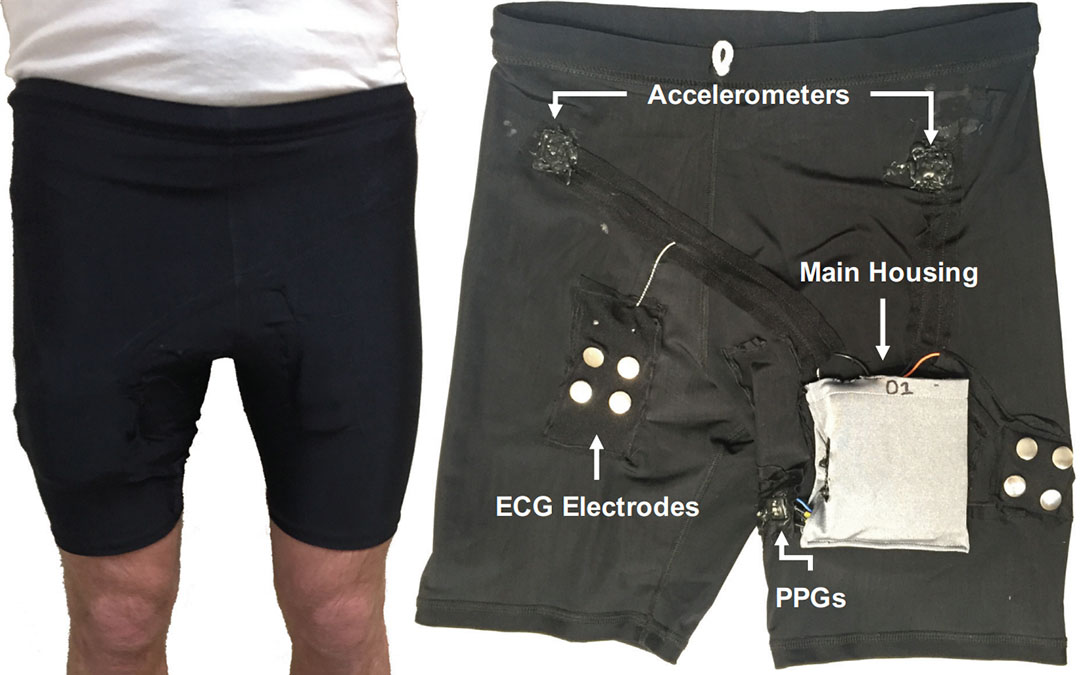

Multi-Site Photoplethysmography

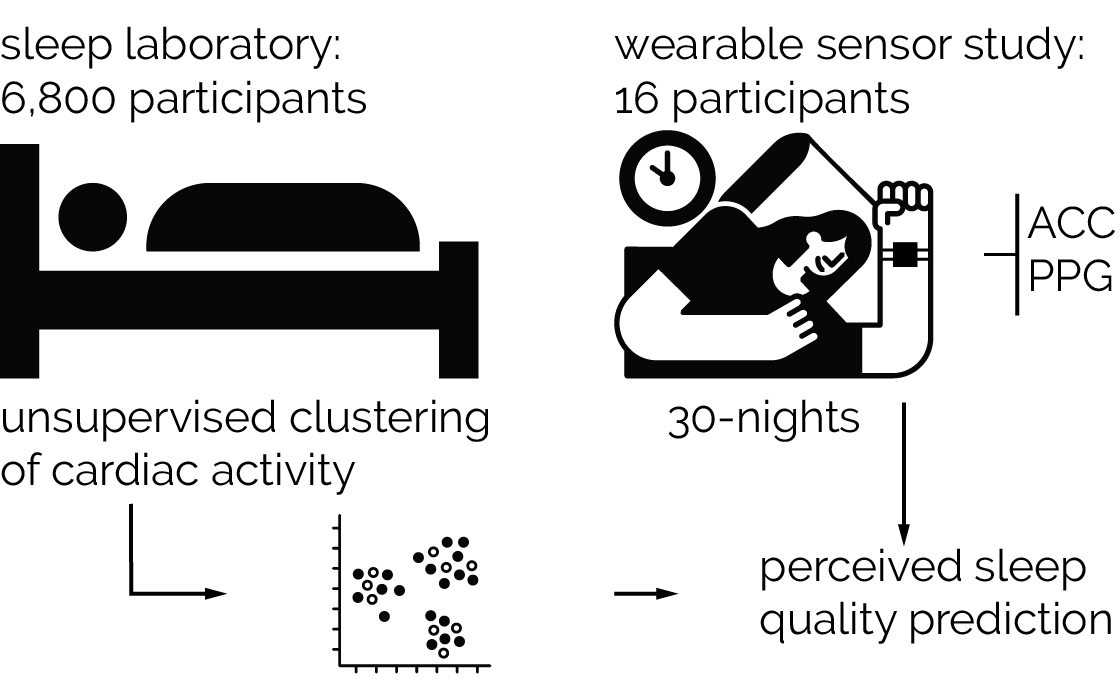

Unsupervised Sleep Quality Estimation

Predicting Perceived Sleep Quality

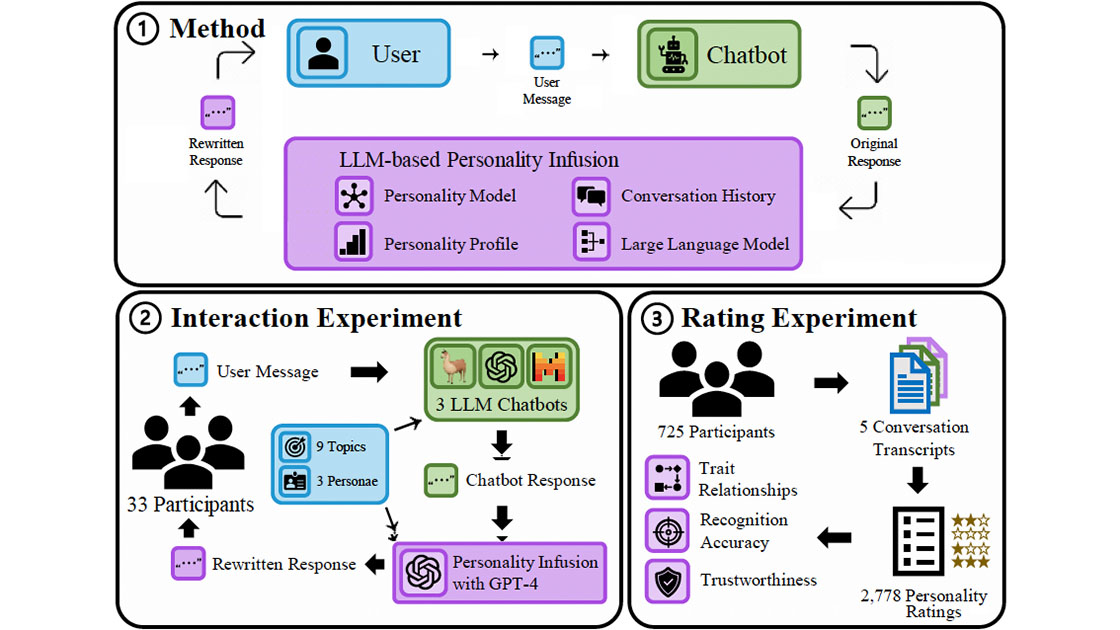

Chatbots With Attitude

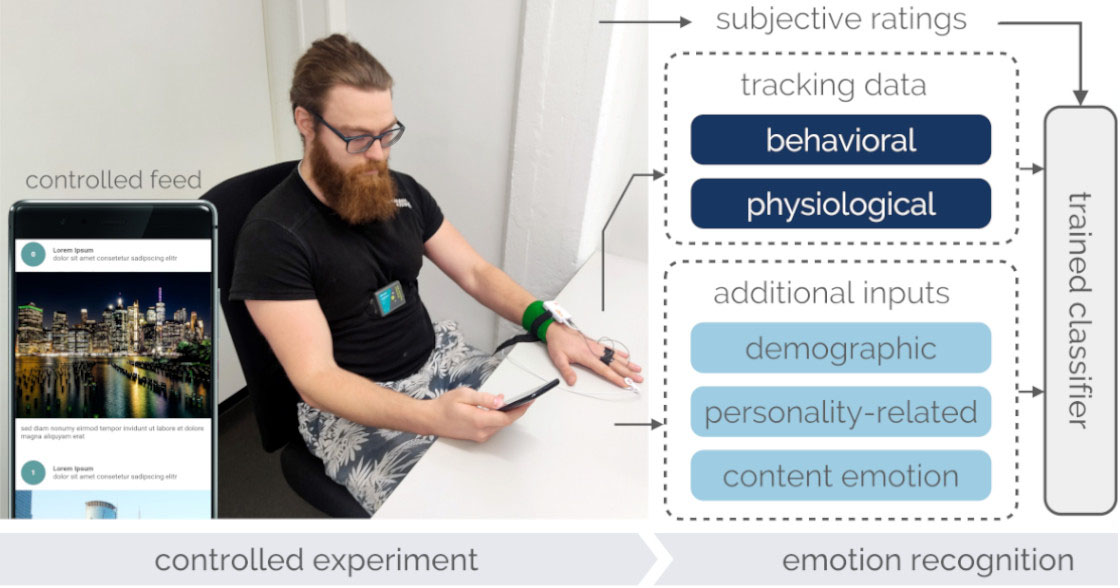

Detecting Users' Emotional States

GPT-3's Personality Dimensions

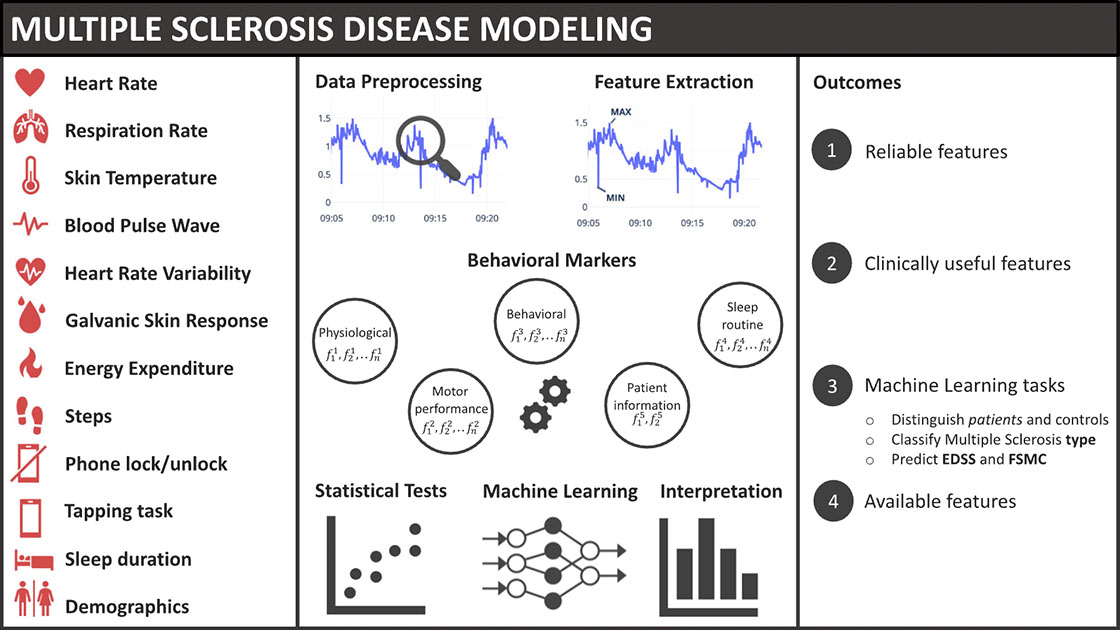

Modeling Multiple Sclerosis

Biomarkers for daily fatigue

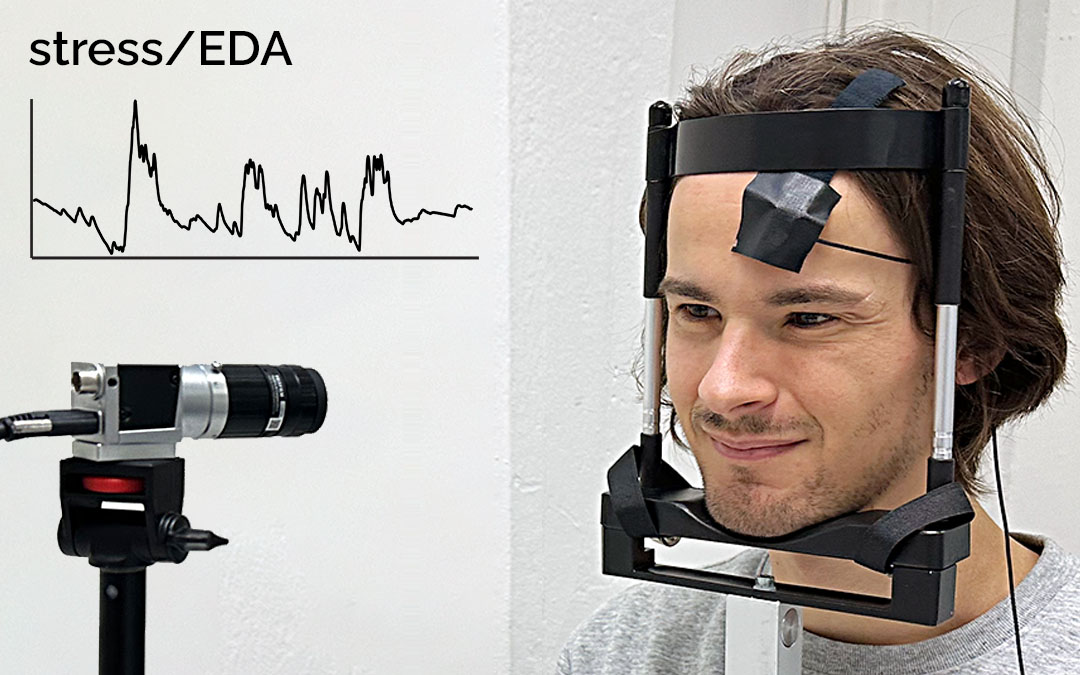

Video-based Sympathetic Arousal

BeliefPPG

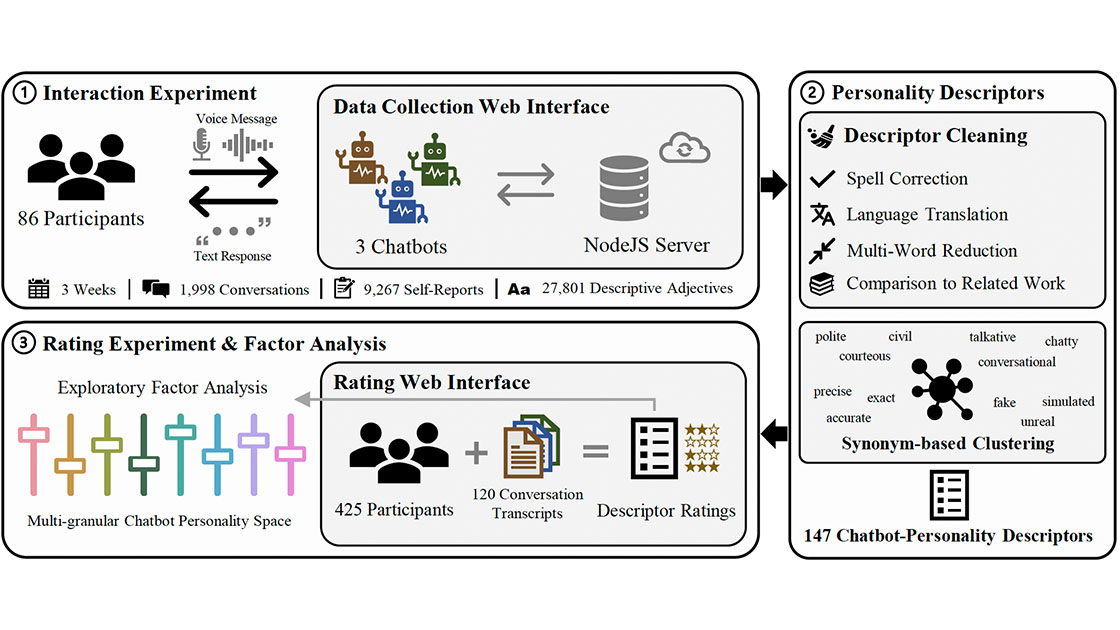

Personality Trait Recognition in the Wild

SSVEP Visual Stimulations vs. User-Friendliness in Virtual Reality

Physiological Responses to Fear, Frustration, and Insight

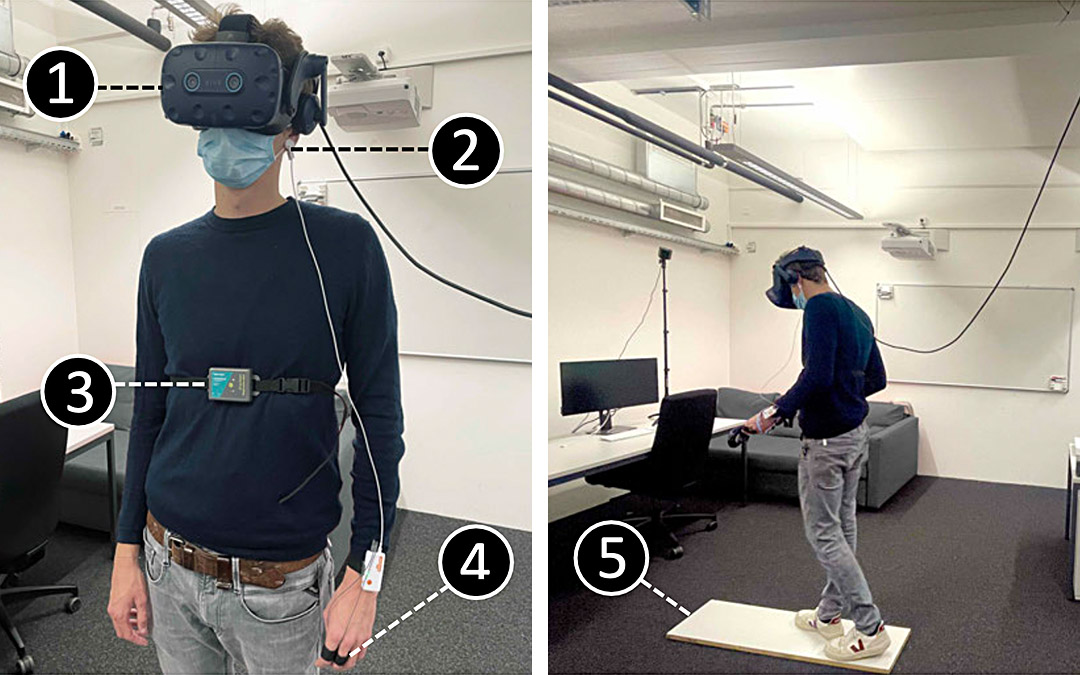

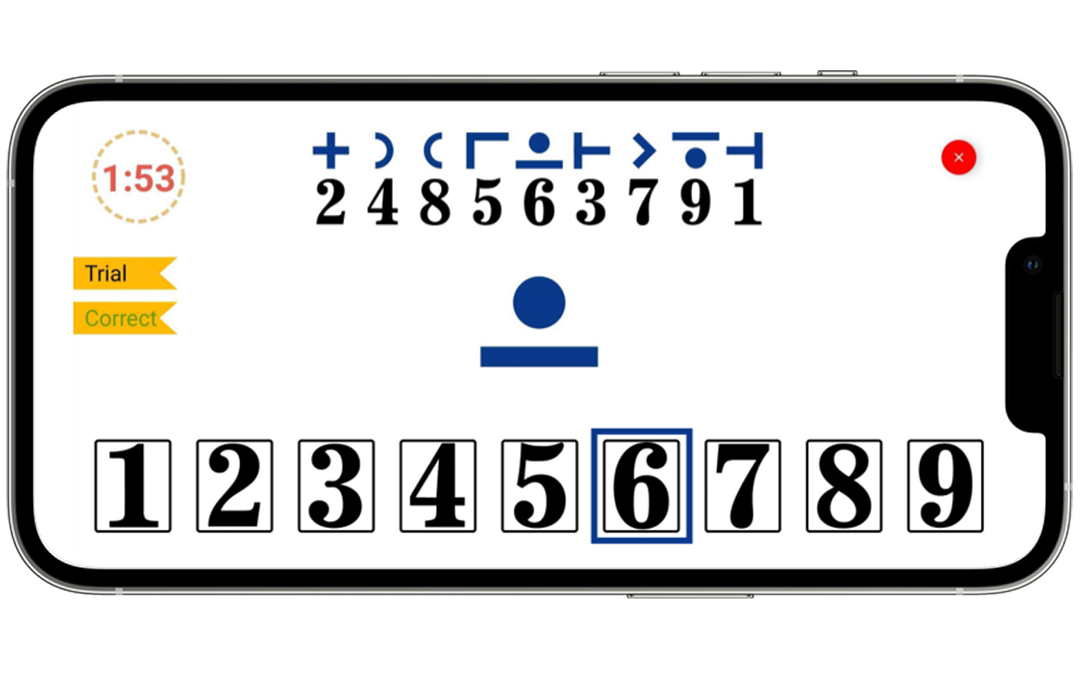

Cognitive Fatigability Assessment Test

Autonomic dysfunction in Multiple Sclerosis

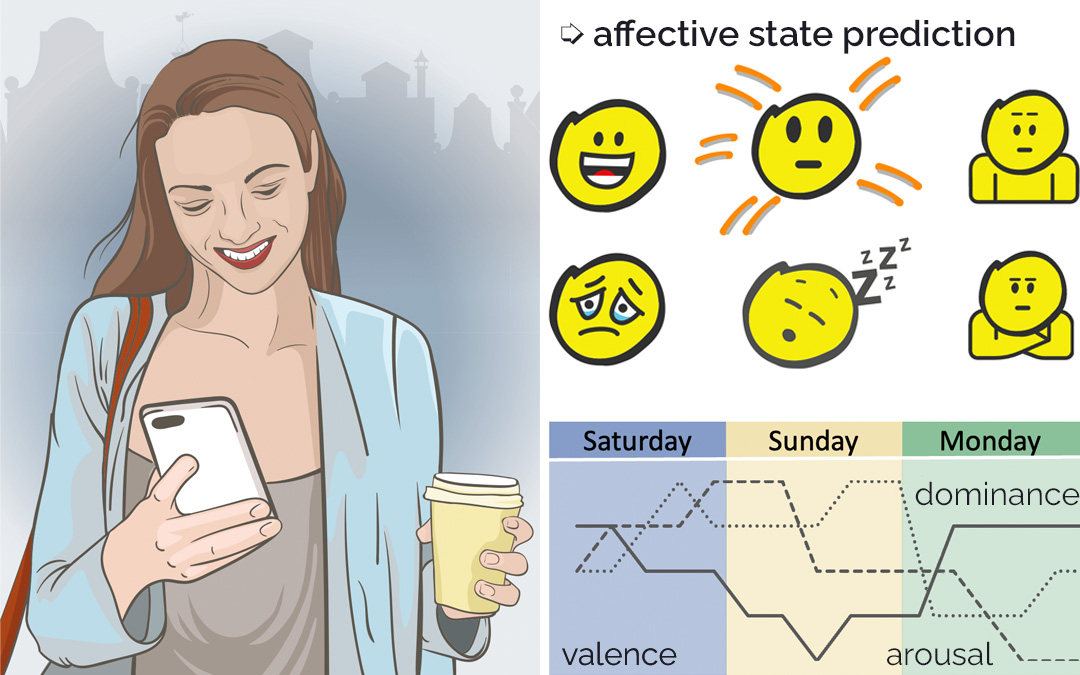

Affective State Prediction

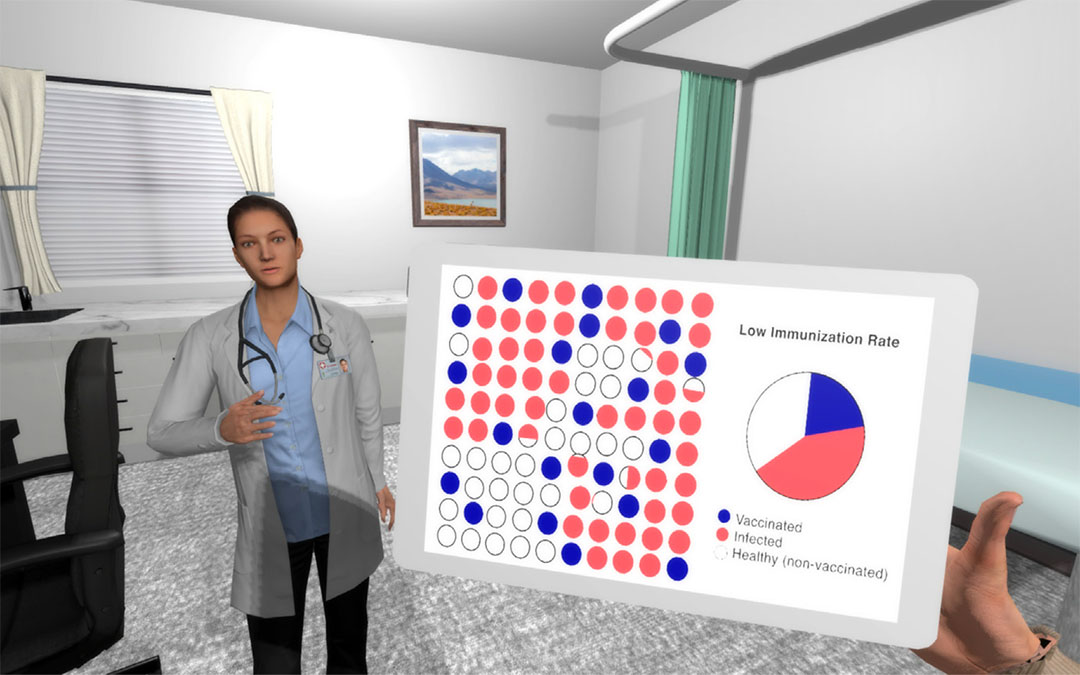

Virtual reality reduces COVID-19 vaccine hesitancy

A self-administered virtual reality intervention increases COVID-19 vaccination intention

Predicting Fatigue from Fatigability

The Effect of Vibratory Stimuli

Assessing Fatigability in Multiple Sclerosis Patients

Naptics

Smartphone Pulse Oximetry

Glabella

DualBlink

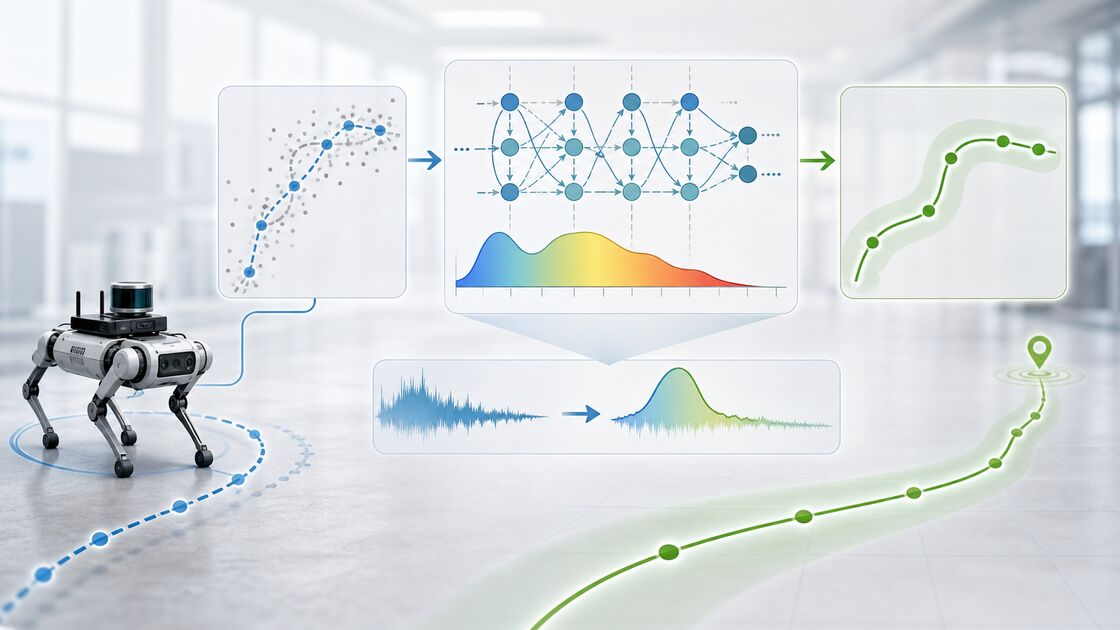

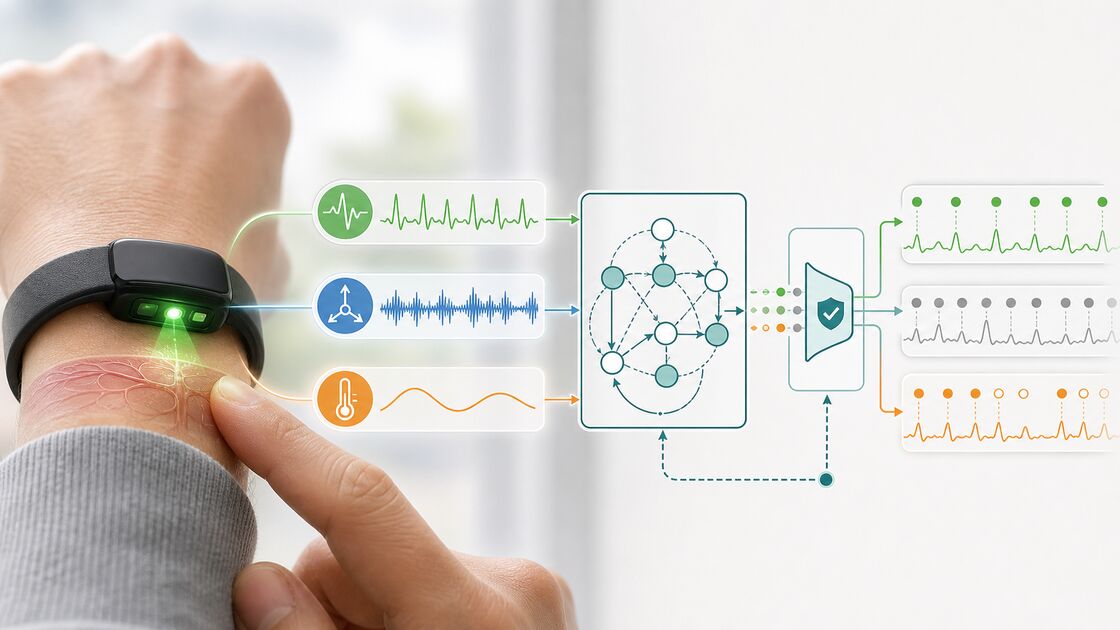

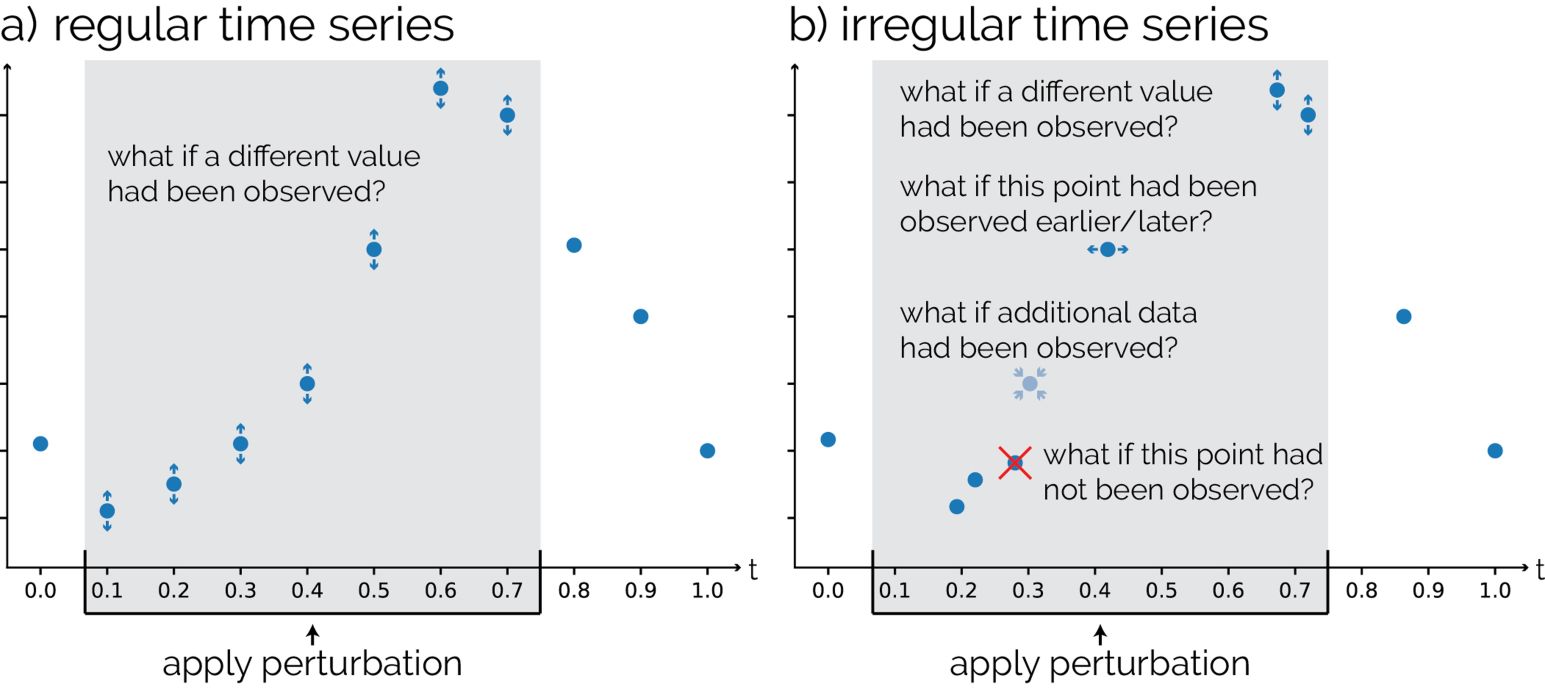

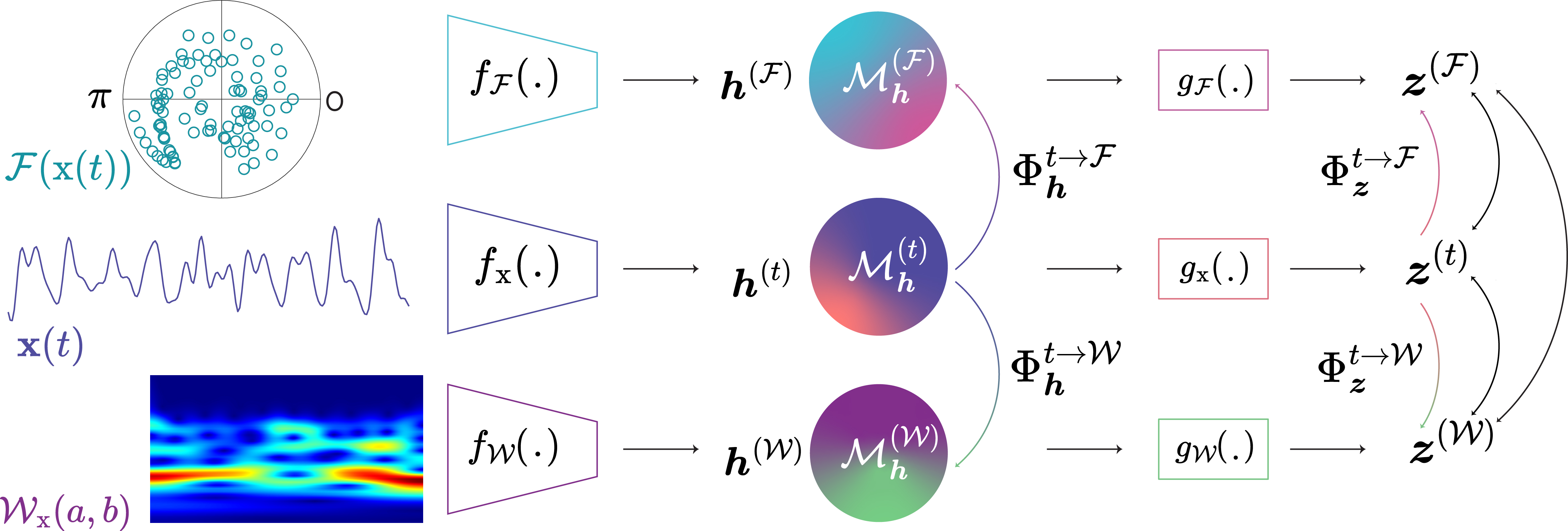

Sensor ML

Machine learning methods for robust inference from sensor signals, time series, and multimodal observations.